The New Chatbot Race: Fastest Growth, Best Answers or Stickiest Users?

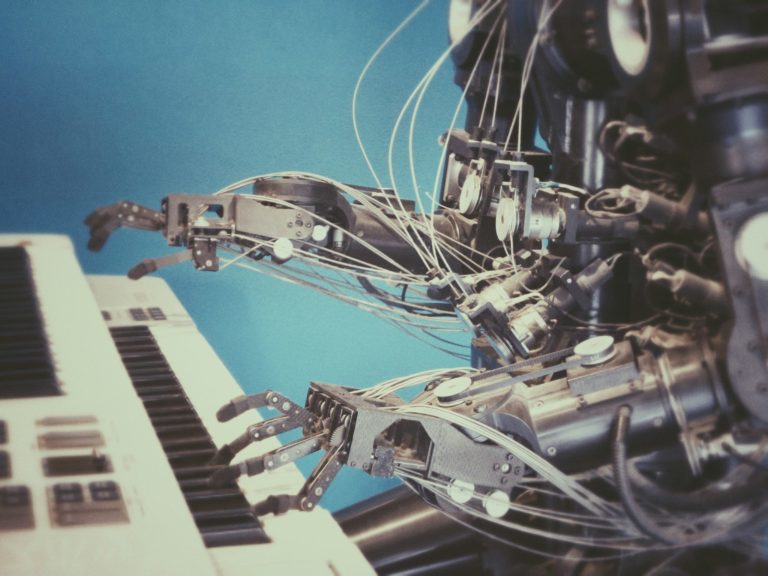

The era of measuring artificial intelligence solely by its ability to pass a bar exam or write a poem is over. We have entered a second phase of the assistant wars where raw intelligence is no longer the primary differentiator. Instead, the industry is shifting toward a battle for stickiness and integration. Major players are moving away from simple text boxes to create entities that can see, hear, and remember. This transition marks a departure from the static chatbots of 2026 and moves us toward persistent digital companions. The question for the average user is no longer which model is the smartest. The real question is which one fits most naturally into your existing habits and hardware. This shift is driven by a realization that a smart tool you forget to use is less valuable than a slightly less capable tool that is always present.

Beyond the Search Box

The current competition focuses on three specific pillars: memory, voice, and ecosystem tie-ins. Early versions of chatbots were essentially amnesiacs. Every time you started a new session, the machine forgot your name, your preferences, and your past projects. Today, companies are building long-term memory systems that allow the AI to recall specific details about your workflow across weeks or months. This persistence transforms a search tool into a collaborator. Interface design has also moved beyond the keyboard. Low-latency voice interaction allows for natural conversations that feel less like a query and more like a phone call. This is not just a gimmick for hands-free use. It is an attempt to reduce the friction of human-computer interaction to near zero.

Ecosystem integration is perhaps the most aggressive part of this new strategy. Google is weaving its Gemini models into Workspace. Microsoft is embedding Copilot into every corner of Windows. Apple is preparing to bring its own intelligence layer to the iPhone. These companies are not just trying to provide the best answers. They are trying to ensure you never have to leave their environment to get those answers. This creates a situation where the best chatbot is simply the one that already has access to your emails, your calendar, and your files. The confusion many users feel stems from the belief that they need to find the single most powerful model. In reality, the industry is moving toward specialized utility where the winner is the one that requires the least effort to access.

A Borderless Assistant Economy

The global impact of this shift is profound because it changes how labor and information move across borders. In many developing economies, these assistants act as a bridge to complex technical knowledge that was previously gated by language or education. When a chatbot can explain a legal document or a coding error in a local dialect with perfect nuance, it levels the playing field. However, this also creates a new form of digital dependency. If a small business in Southeast Asia or Eastern Europe builds its entire workflow around a specific AI memory system, switching to a competitor becomes nearly impossible. This is the new ecosystem lock-in that will define the next decade of global tech competition.

We are also seeing a shift in how information is consumed globally. Traditional search engines are being bypassed in favor of direct answers. This has massive implications for the global advertising market and the survival of independent publishers. If the AI provides the answer without the user ever clicking a link, the economic model of the internet breaks. Governments are already struggling to keep up with these changes. While the European Union focuses on safety and transparency, other regions are prioritizing rapid adoption to gain a competitive edge. This creates a fragmented global environment where the capabilities of your AI assistant might depend entirely on which side of a border you are standing on. The technology is no longer a static product but a dynamic service that adapts to local regulations and cultural norms in real time.

Living with a Silicon Shadow

Consider a typical day for a project manager named Sarah. In the old model, she would spend her morning toggling between five different apps to coordinate a product launch. She would search through old emails for a specific deadline and then manually update a spreadsheet. In the new model, her assistant has been listening to her meetings and has access to her message history. When she wakes up, she asks the assistant for a summary of the most urgent tasks. The AI remembers that she was worried about a specific vendor delay from three days ago and highlights that first. It does not just provide a list. It suggests a draft for an email to that vendor based on the tone she used in previous successful negotiations. This is the power of memory and context in action.

Later in the day, Sarah uses voice mode while driving to a client site. She asks the assistant to explain a complex technical change in the software architecture. Because the AI has low latency, the conversation feels fluid. She can interrupt, ask for clarification, and pivot the topic without the awkward pauses that defined earlier voice tech. She receives a notification that the vendor has responded and asks the AI to summarize the attachment.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

However, this level of integration brings a new set of frustrations. When the AI makes a mistake in this deeply integrated state, the consequences are higher. If a standalone chatbot gives a wrong answer, you ignore it. If an integrated assistant deletes a calendar invite or misinterprets a sensitive email, it disrupts your life. Users are finding that they need to develop a new kind of literacy to manage these assistants. You have to know when to trust the memory and when to verify the facts. The race for stickiness means these tools will become more assertive, often suggesting actions before you even realize you need them. This proactivity is the next frontier of the user experience, but it requires a level of trust that many users are not yet ready to give.

The Price of Total Recall

This move toward total integration raises difficult questions that the tech industry often ignores. What is the hidden cost of an AI that remembers everything? When a company stores your personal preferences and professional history to provide better service, it is also creating a permanent record of your life. We must ask who truly owns this memory. If you decide to leave a platform, can you take your AI’s memory with you? Currently, the answer is largely no. This creates a situation where your personal data is used as a tether to keep you paying a monthly subscription. The privacy implications are staggering, especially as these tools begin to process audio and video in the background to provide better context.

There is also the question of energy and sustainability. Maintaining a persistent, high-intelligence assistant for millions of people requires an enormous amount of compute power. Every time you ask your AI to remember a detail or summarize a meeting, a server farm somewhere consumes water and electricity. As we move toward a world where everyone has a silicon shadow, the environmental footprint of our digital lives will grow. We also need to consider the cognitive cost. If we delegate our memory and our planning to an AI, what happens to our own ability to organize and recall information? We are trading mental effort for convenience, but we do not yet know what we are losing in the process. Is the efficiency worth the potential atrophy of our own cognitive skills?

Under the Hood of the Modern Assistant

For those who want to look past the marketing, the real competition is happening at the infrastructure level. Modern assistants are moving toward massive context windows, with some models now supporting over one million tokens. This allows the AI to ingest entire codebases or hundreds of pages of documentation in a single prompt. For a power user, this is a significant upgrade over the small snippets allowed in 2026. However, large context windows come with a trade-off in speed and cost. Developers are now focusing on RAG (Retrieval-Augmented Generation) to give models access to local data without needing to retrain the entire system. This allows for a more personalized experience while keeping the core model lean and fast.
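To make the retrieval idea concrete, here is a minimal sketch of the RAG pattern in Python. It is an illustration under stated assumptions, not how any particular assistant works: the `embed` function is a toy word-count stand-in for a real embedding model, and the notes, function names, and prompt wording are all hypothetical. The point is only the shape of the technique: find the most relevant local notes first, then hand just those to the model along with the question.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (assumption): lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, notes: list[str], k: int = 2) -> list[str]:
    """Return the k notes most similar to the query."""
    q = embed(query)
    ranked = sorted(notes, key=lambda n: cosine(q, embed(n)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, notes: list[str], k: int = 2) -> str:
    """Assemble the text that would be sent to a model (hypothetical wording)."""
    context = "\n".join(f"- {n}" for n in retrieve(query, notes, k))
    return f"Use only these notes:\n{context}\n\nQuestion: {query}"

# Hypothetical local data, invented for this example.
NOTES = [
    "The vendor said the shipment is delayed until Friday.",
    "The launch review meeting moved to Tuesday at 10am.",
    "Sarah prefers short emails with a clear deadline.",
]

if __name__ == "__main__":
    print(build_prompt("When does the vendor shipment arrive?", NOTES, k=1))
```

The model itself never changes in this setup. Only the small block of retrieved notes changes from question to question, which is why the approach avoids retraining.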

API limits and latency are the new bottlenecks for power users. If you are building a custom workflow that relies on real-time voice or vision, the time it takes for a packet to travel to a cloud server and back becomes a critical factor. This is why we are seeing a push for local execution. Companies are developing specialized NPU (Neural Processing Unit) chips for laptops and phones to run smaller models locally. This provides better privacy and removes the network round trip for basic tasks while offloading complex reasoning to the cloud. Local storage of AI embeddings is also becoming a standard for those who want to maintain their own memory banks without relying on a single provider. The enthusiast end of the market is no longer just about which model has the highest benchmark score. It is about which model has the most flexible API, the most generous rate limits, and the best support for local-first workflows.
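As a rough picture of what a local "memory bank" can look like, the sketch below keeps embeddings in a SQLite database on your own machine and looks up the closest match. Everything here is an assumption made for illustration: the table layout, the function names, and especially the three-number vectors, which are made up by hand. A real setup would get its vectors from an embedding model and would likely use a purpose-built vector index.

```python
import json
import math
import sqlite3

def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a local store; pass a file path to persist it on disk."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory (text TEXT PRIMARY KEY, vector TEXT NOT NULL)"
    )
    return conn

def remember(conn: sqlite3.Connection, text: str, vector: list[float]) -> None:
    """Save a piece of text with its embedding, stored as a JSON string."""
    conn.execute(
        "INSERT OR REPLACE INTO memory (text, vector) VALUES (?, ?)",
        (text, json.dumps(vector)),
    )
    conn.commit()

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def recall(conn: sqlite3.Connection, query_vector: list[float], k: int = 1) -> list[str]:
    """Return the k stored texts whose vectors are closest to the query."""
    rows = conn.execute("SELECT text, vector FROM memory").fetchall()
    ranked = sorted(rows, key=lambda r: cosine(query_vector, json.loads(r[1])), reverse=True)
    return [text for text, _ in ranked[:k]]

# Hand-written vectors for illustration only (assumption, not real embeddings).
store = open_store()
remember(store, "vendor delay", [0.9, 0.1, 0.0])
remember(store, "launch date", [0.1, 0.9, 0.0])

if __name__ == "__main__":
    print(recall(store, [1.0, 0.0, 0.0]))
```

Because the data sits in an ordinary file under your control, it can in principle be reused with a different model provider, as long as the vectors are regenerated with whatever embedding model the new provider expects.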

The Choice Ahead

The chatbot race has moved from a sprint for intelligence to a marathon for utility. We are no longer just comparing text outputs. We are comparing how these systems integrate with our hardware, how they handle our private data, and how they anticipate our needs. The winner of this race will not necessarily be the company with the most parameters. It will be the company that creates the most invisible and frictionless experience. As these assistants become more capable, the line between our digital and physical lives will continue to blur. One question remains unanswered: as these assistants become more human-like in their memory and voice, will we start to treat them as colleagues or will they remain just another piece of software? The answer will define our relationship with technology for the next generation.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
