The New Model Stack: Chat, Search, Agents, Vision and Voice

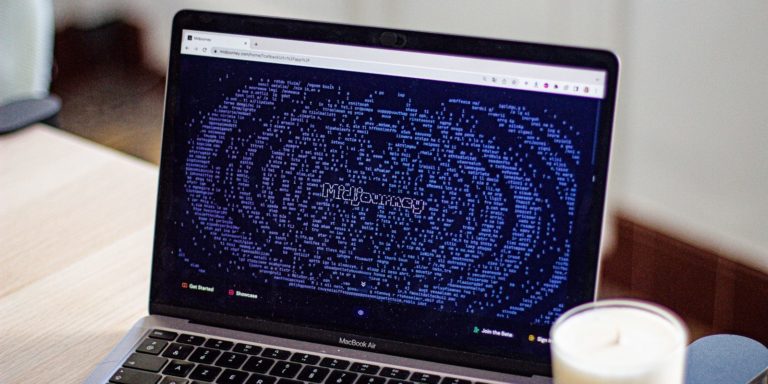

The End of the Ten Blue Links

The internet is moving away from the directory model that defined the last two decades. For years, users typed a query and received a list of websites. Today, that interaction is being replaced by a sophisticated stack of capabilities. This stack includes chat interfaces, real-time search, autonomous agents, computer vision, and low-latency voice. The goal is no longer to help you find a website. The goal is to provide the answer directly or complete the task on your behalf. This shift puts heavy pressure on click-through rates for traditional publishers. When an AI overview provides a good enough summary of an article, the user often has no reason to visit the original source. This is not just a change in technology. It is a change in the fundamental economy of the web. We are seeing the rise of answer engines that prioritize synthesis over navigation. This new model stack requires a different way of thinking about visibility. Being the first result on a search page is becoming less important than being the primary source for a model's training set or a real-time retrieval system.

Mapping the Multi-Modal Ecosystem

The structure of this new environment is built on four distinct layers. The first layer is the chat interface. This is the conversational front end where users express intent in natural language. Unlike the rigid keyword structure of the past, these interfaces allow for nuance and follow-up questions. The second layer is the search engine, which has evolved into a retrieval system. Instead of just indexing pages, it now feeds high-quality data into large language models to ensure accuracy and freshness. This is where the tension between visibility and traffic becomes most apparent. A brand might be visible in an AI response, but that visibility does not always translate into a visit. The third layer consists of agents. These are specialized programs designed to execute multi-step workflows. An agent does not just tell you which flight is cheapest. It logs into the site and prepares the booking. The final layer includes vision and voice. These are the sensory inputs that allow the stack to interact with the physical world. You can point a camera at a broken engine and ask for a fix, or talk to your car while driving to summarize a long report. This integrated approach is replacing the siloed app experience. Users no longer want to jump between five different platforms to get one thing done. They want a single point of entry that handles the complexity in the background. This transition is moving the web toward a more proactive state. Information is no longer something you go out and find. It is something that is delivered to you in a ready-to-use format. This change is forcing every digital business to rethink how they signal their value to these systems.

The Economic Shift of Information Discovery

Globally, the impact of this new stack is felt most by those who rely on information arbitrage. Publishers, marketers, and researchers are facing a world where the middleman is being automated. In the old world, a user might click through three different blogs to compare the features of a new laptop. In the new world, a single AI overview pulls the data from those three blogs and presents a comparison table. The blogs provide the value, but the AI captures the attention. This creates a crisis for content quality signals. If publishers cannot get traffic, they cannot fund high-quality reporting. If high-quality reporting disappears, the models have nothing of substance to summarize. This circular dependency is one of the biggest challenges for the tech industry in 2026. We are seeing a move toward a zero-click reality. For businesses, this means that traditional SEO is no longer enough. They must optimize for being the definitive source that an AI trusts. This involves structured data, clear authority signals, and a focus on being the primary source of truth. The global audience is also seeing a shift in how they trust information. When a voice in your ear tells you a fact, you are less likely to check the source than when you see a link on a screen. This places an immense responsibility on the companies building these models. They are no longer just providing a map to the internet. They are acting as the oracle for it. This shift is happening at different speeds in different regions, but the direction is clear. The gatekeepers of the past are being replaced by the synthesizers of the future.

A Day with the Integrated Assistant

Consider a marketing manager named Sarah who is preparing for a product launch. In the past, Sarah would spend her morning opening twenty tabs. She would check Google for competitor news, use a separate tool for social media analytics, and another for drafting emails. With the new model stack, her workflow is consolidated. She starts her day by speaking to her workstation. She asks for a summary of the latest competitor moves. The system does not just give her links. It uses its search layer to find news, its vision layer to analyze competitor Instagram posts, and its chat layer to synthesize a report. Sarah then asks the agent layer to draft a response strategy based on her brand voice. The system pulls from her local storage to ensure the tone is consistent with previous campaigns. While she is driving to a meeting, she uses the voice interface to tweak the draft. She notices a typo in the document and corrects it with a quick verbal command. This is not a series of disconnected tasks. It is a single, continuous flow of intent. Later, she needs to find a venue for a launch event. She points her phone camera at a potential space. The vision system identifies the location, pulls up the floor plan, and calculates the capacity. She asks the agent to check her calendar and send a booking inquiry to the venue manager. The agent handles the email and sets a reminder to follow up. Sarah has spent her day making decisions rather than performing manual data entry. This scenario illustrates the difference between visibility and traffic. The venue manager received an inquiry because Sarah was able to find and verify the space through her AI stack. The venue website might not have received a traditional hit from a search engine, but it gained a high-value lead. This is the new discovery pattern. It is less about browsing and more about execution. The friction of the old web is being sanded down by a layer of intelligent automation that understands context. This allows professionals to focus on strategy while the stack handles the logistics of information gathering and communication.

The Ethical Price of Immediate Answers

The move toward this integrated stack raises difficult questions about the cost of convenience. If users never leave the chat interface, how do we ensure the survival of the open web? We must ask if we are trading diversity of thought for speed of access. When a single model decides which information is relevant, it acts as a massive filter. This filter can introduce bias or hide dissenting opinions. There is also the question of privacy. For an agent to book a flight or manage a calendar, it needs deep access to personal data. Where is this data stored and who can see it? The energy cost is another hidden factor. Generating a multi-modal response requires significantly more computing power than a traditional keyword search. We are also seeing a shift in how we value human expertise. If an AI can summarize a legal document or a medical study, what happens to the professionals who spent years learning those skills? The risk is that we become overly dependent on a few large platforms that control the stack. These platforms hold the keys to how we see the world. We must also consider the long-term impact on our cognitive abilities. If we stop searching and start only receiving, do we lose the ability to think critically about the sources of our information?

The Technical Architecture of Modern Intent

For the power user, the new model stack is defined by its plumbing. The shift from simple API calls to complex RAG (Retrieval-Augmented Generation) workflows is the core of this evolution. Developers are no longer just hitting a GPT endpoint. They are managing sophisticated pipelines that connect local vector databases to live search results. One of the biggest hurdles is API rate limits. As models become more integrated into daily workflows, the volume of tokens being processed is skyrocketing. This has led to a focus on local storage and edge computing. Users want their data to stay on their devices while still benefiting from the power of large models. This is where small language models come into play. They handle basic tasks locally to save on latency and cost, only reaching out to the cloud for heavy lifting. Context windows are also a critical metric. A larger context window allows the model to remember more of a conversation or a project history. However, as the window grows, so does the chance of the model losing focus or hallucinating. We are seeing a move toward more structured outputs. Instead of just returning text, models are now returning JSON or other machine-readable formats that agents can use to trigger actions. This is the bridge between talking and doing. The integration of vision and voice adds another layer of complexity. Processing video in real time requires massive bandwidth and low latency. This is why we see a push for specialized hardware that can handle these specific workloads. The goal is a seamless experience where the transition between typing, speaking, and seeing is invisible to the user. This requires a level of coordination between hardware and software that we have not seen since the early days of the smartphone.
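
For readers who want to see the plumbing, here is a minimal sketch of the retrieve-then-act flow described above. It is a toy illustration, not how any particular product works. Everything in it is an assumption made for the example: the three sample documents, the word-count "embedding" that stands in for a real vector database, the `fake_model` stub that stands in for a language model call, and the `send_inquiry` action.

```python
import json
import math
from collections import Counter

# Hypothetical in-memory document store. A real pipeline would query
# a vector database and live search results instead.
DOCUMENTS = [
    "The venue on Main Street holds 200 guests and has a rooftop terrace.",
    "Competitor X launched a new laptop with a 14-inch display.",
    "Our brand voice is friendly, concise, and avoids jargon.",
]


def embed(text):
    """Toy embedding: a bag-of-words count vector (stand-in for a learned one)."""
    words = [w.strip(".,?!").lower() for w in text.split()]
    return Counter(w for w in words if w)


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, k=1):
    """Retrieval step: return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(DOCUMENTS, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, context):
    """Augmentation step: put the retrieved passages in front of the question."""
    joined = "\n".join(f"- {passage}" for passage in context)
    return f"Context:\n{joined}\n\nQuestion: {query}"


def fake_model(prompt):
    """Stub for the generation step. It ignores the prompt and returns a
    fixed structured (JSON) reply, as a real model might be asked to do."""
    return json.dumps({"action": "send_inquiry", "arguments": {"guests": 150}})


def send_inquiry(guests):
    """Hypothetical agent action triggered by the model's structured output."""
    return f"Inquiry drafted for {guests} guests"


# Allow-list of actions the agent may run; anything else is rejected.
ACTIONS = {"send_inquiry": send_inquiry}


def dispatch(raw_reply):
    """Parse the model's JSON reply and run the matching action."""
    reply = json.loads(raw_reply)
    handler = ACTIONS.get(reply.get("action"))
    if handler is None:
        raise ValueError(f"Unknown action: {reply.get('action')!r}")
    return handler(**reply.get("arguments", {}))


if __name__ == "__main__":
    question = "How many guests does the venue hold?"
    context = retrieve(question)
    prompt = build_prompt(question, context)
    print(prompt)
    print(dispatch(fake_model(prompt)))
```

The point of the allow-list in `ACTIONS` is that the model only names an action and its arguments. The surrounding program decides whether that action exists and is permitted to run, which is one simple way to keep the step from talking to doing under the developer's control.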

The Unresolved Future of Discovery

The transition to a multi-modal stack is not a finished process. It is a period of intense experimentation. We are currently in a state of confusion where users are not sure when to use a search engine and when to use a chat interface. This confusion will likely persist until the two experiences merge completely. The big question that remains is how the web will be funded in an era of zero-click searches. If the traditional ad model breaks, a new one must take its place. This might involve micropayments for data usage or a complete shift to subscription-based services. The only certainty is that the way we interact with information has changed forever. We are no longer looking for links. We are looking for solutions. The new model stack provides those solutions, but it does so at a price that we are only beginning to calculate. Whether this leads to a more informed society or a more siloed one is a question that only time will answer.
