The Biggest Ethical Questions AI Still Cannot Escape

Silicon Valley promised that artificial intelligence would solve the most difficult problems of humanity. Instead, the technology has created a new set of friction points that no amount of code can fix. We are moving past the phase of wonder and into a period of hard accountability. The core issue is not a future machine uprising but the current reality of how these systems are built and deployed. Every large language model relies on a foundation of human labor and scraped data. This creates a fundamental conflict between the companies that build the tools and the people whose work powers them. Regulators in Europe and the United States are now asking who is responsible when a system makes a mistake that ruins a life. The answer remains unclear because the legal frameworks were not built for software that acts with this level of autonomy. We are seeing a shift in focus from what the technology can do to what it should be allowed to do in public life.

The Friction of Automated Decision Making

At its core, modern artificial intelligence is a prediction engine. It does not understand truth or ethics. It calculates the probability of the next word or pixel based on massive datasets. This lack of inherent understanding creates a gap between the output of a machine and the requirements of human justice. When a bank uses an algorithm to determine creditworthiness, the system might identify patterns that correlate with race or zip code. This is not because the machine is sentient but because the historical data it trained on contains those biases. Companies often hide these processes behind proprietary secrets, making it impossible for a rejected applicant to know why they were turned down. This lack of transparency is the defining characteristic of the current era of automation. It is often called the black box problem.
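
One way to make the credit example concrete is to compare approval rates across groups. The sketch below is illustrative only: the data is invented, the function names are hypothetical, and the 0.8 threshold is borrowed from the "four-fifths" heuristic in US employment guidance rather than from any lending rule named in this article.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical decisions: (zip-code cluster, loan approved?)
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)

ratio = disparate_impact_ratio(sample)
flag = "review recommended" if ratio < 0.8 else "no flag"
print(f"disparate impact ratio = {ratio:.2f} ({flag})")
```

A low ratio does not prove discrimination, and a high one does not rule it out. It only shows the kind of check an auditor could run if the decisions were open to inspection, which is exactly what the black box problem prevents.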

The technical reality is that these models are trained on the open internet, which is a repository of both human knowledge and human prejudice. Developers try to filter this data, but the scale makes perfect curation impossible. When we talk about AI ethics, we are really talking about how we handle the errors that these systems inevitably produce. There is a growing tension between the speed of deployment and the need for safety. Many companies feel pressured to release products before they are fully understood to avoid losing market share. This creates a situation where the public becomes a group of involuntary test subjects for unproven software. The legal system is struggling to keep up with the pace of change as courts debate whether a software developer can be held liable for the hallucinations of their creation.

The New Global Digital Divide

The impact of these systems is not distributed equally across the globe. While the headquarters of the major AI firms are located in a few wealthy nations, the consequences of their work are felt everywhere. There is a new form of labor exploitation emerging in the Global South. Thousands of workers in countries like Kenya and the Philippines are paid low wages to label data and filter out traumatic content. These workers are the invisible safety net that prevents AI from outputting toxic material, yet they rarely share in the profits of the industry. This creates a power imbalance where wealthy nations control the tools while developing nations provide the raw labor and data needed to sustain them.

Cultural dominance is another significant concern for the international community. Most large models are trained primarily on English language data and Western cultural norms. This means the systems often fail to understand local context or languages with fewer digital resources. When these tools are exported, they risk overwriting local knowledge with a homogenized Western perspective. This is not just a technical flaw but a threat to cultural diversity. Governments are beginning to realize that relying on foreign AI infrastructure creates a new kind of dependency. If a country does not have its own sovereign AI capabilities, it must follow the rules and values of the companies that provide the service. The global community is currently grappling with several critical issues:

- The concentration of computing power in a handful of private corporations.

- The environmental cost of training massive models in regions with water scarcity.

- The erosion of local languages in digital spaces dominated by English-centric models.

- The lack of international agreements on the use of autonomous systems in warfare.

- The potential for automated misinformation to destabilize democratic elections.

Living with the Algorithm

Consider a day in the life of Sarah, a mid-level manager at a logistics firm in . Her morning begins with an AI-generated summary of her emails. The system highlights what it thinks are the most urgent tasks, but it misses a subtle complaint from a long-time client because the sentiment analysis tool did not recognize the sarcasm. Later, she uses a generative tool to draft a performance review for an employee. The software suggests a lower rating based on productivity metrics that do not account for the time the employee spent mentoring new hires. Sarah must decide whether to trust her own judgment or the data-driven recommendation of the machine. If she ignores the AI and the employee later fails, she might be blamed for not following the data. This is the quiet pressure of algorithmic management.

In the afternoon, Sarah applies for a new insurance policy. The insurance company uses an automated system to scan her social media and health records. The system flags her as a high risk because she recently joined a hiking group, which the algorithm associates with potential injury. There is no human to talk to and no way to explain that she is an experienced hiker with a clean bill of health. Her premium increases instantly. This is a real-world consequence of a system that prioritizes efficiency over individual nuance. By the evening, Sarah is browsing a news site where half the articles were written by bots. She finds it increasingly difficult to tell what is a reported fact and what is a synthesized summary designed to keep her clicking. This constant exposure to automated content changes how she perceives reality.

The Price of Efficiency

We must ask difficult questions about the hidden costs of our current trajectory. If an AI system saves a company millions of dollars but results in the loss of a thousand jobs, who is responsible for the social cost? We often treat technological progress as an inevitable force of nature, but it is the result of specific choices made by individuals with specific incentives. Why do we prioritize the optimization of profit over the stability of the labor market? There is also the question of data privacy in an era where every interaction is a training point. When you use a free AI assistant, you are not the customer; you are the product. Your conversations and preferences are used to refine a model that will eventually be sold back to you or your employer. What happens to the concept of private thought when our digital assistants are constantly listening and learning?

The environmental impact is another cost that is rarely discussed in marketing materials. Training a single large model can consume as much electricity as hundreds of homes use in a year. The cooling requirements for data centers are placing a strain on local water supplies in arid regions. Are we willing to trade ecological stability for a slightly better chatbot? We must also consider the long-term impact on human cognition. If we outsource our writing, our coding, and our critical thinking to machines, what happens to those skills in the human population? We might be building a world that is highly efficient but populated by people who can no longer function without a digital crutch. These are not technical problems to be solved with more data. They are fundamental questions about what kind of future we want to inhabit.

The Infrastructure of Influence

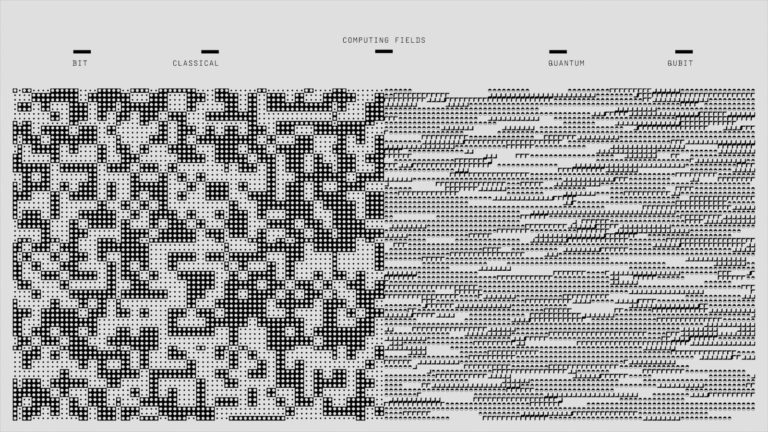

For the power users and developers, the ethical questions are embedded in the technical specifications. The shift toward local storage and edge computing is partly a response to privacy concerns. By running models locally, users can avoid sending sensitive data to a central server. However, this creates a new set of challenges regarding hardware requirements and API limits. Most high-performing models require significant VRAM and specialized chips that are currently in short supply. This creates a bottleneck where only those with the latest hardware can access the most capable tools. Developers are also struggling with the limitations of current architectures. While transformer models have been dominant, they are notoriously difficult to inspect. We can see the weights and the architecture, but we cannot easily explain why a specific input leads to a specific output.
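
The hardware bottleneck comes down to simple arithmetic. As a rough sketch, the memory needed just to hold a model's weights is the parameter count times the bytes stored per parameter. The 7-billion-parameter figure and the function name below are illustrative assumptions, and real usage is higher because activations and caches also take memory.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Memory needed to hold the weights alone, in GiB."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / (1024 ** 3)

# Hypothetical 7-billion-parameter model at three common precisions.
for bits in (16, 8, 4):
    gib = weight_memory_gib(7_000_000_000, bits)
    print(f"7B parameters at {bits}-bit: about {gib:.1f} GiB for weights")
```

At 16-bit precision the weights alone come to roughly 13 GiB, which already exceeds the memory on many consumer graphics cards. That is why lower-precision versions of models matter so much for local use.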

The integration of AI into professional workflows is also hitting a wall of data poisoning and model collapse. If the internet becomes saturated with AI-generated content, future models will be trained on the output of their predecessors. This leads to a degradation of quality and an amplification of errors. To combat this, some developers are looking into verifiable data sources and watermarking techniques. There is also a push for more transparent AI ethics analysis to help users understand the risks. The technical community is currently focused on several key areas of development:

- The implementation of differential privacy to protect individual data points in training sets.

- The development of smaller, more efficient models that can run on consumer hardware.

- The creation of standardized benchmarks for detecting bias and factual errors.

- The use of federated learning to train models across multiple decentralized devices.

- The exploration of new architectures that offer better interpretability than standard neural networks.
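
To give a sense of what the first item on that list means in practice, here is a minimal sketch of the Laplace mechanism, one of the simplest differential privacy techniques. It adds calibrated random noise to a published count so that no single person's record can be inferred from the result. The data, the function names, and the epsilon value are assumptions made for illustration. Privacy-preserving model training typically adds noise to gradients instead, which this sketch does not attempt.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def private_count(records, predicate, epsilon, rng):
    """Count matching records, then add noise sized for a sensitivity of 1.

    Adding or removing one person changes a count by at most 1, so the
    noise scale is 1 / epsilon. Smaller epsilon means more noise.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical dataset: ages of eight people.
ages = [23, 35, 41, 29, 52, 38, 47, 31]
rng = random.Random(0)  # fixed seed so the demo is repeatable
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
print(f"true count = 3, published count = {noisy:.2f}")
```

The design trade-off is visible in the single `epsilon` parameter: a lower value protects individuals better but makes the published number less accurate.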

The Unresolved Path Forward

The rapid evolution of artificial intelligence has outpaced our ability to govern it. We are currently in a standoff between the desire for innovation and the need for protection. The biggest ethical questions are not about the capabilities of the machines but about the intentions of the people who control them. As we move into , the focus will likely shift from the models themselves to the data supply chain and the accountability of the developers. We are left with a live question that will define the next decade. Can we build a system that is both powerful enough to solve our problems and transparent enough to be trusted? The answer is not yet written in code. It will be decided in courtrooms, boardrooms, and the everyday choices of users who must decide how much of their autonomy they are willing to trade for convenience.
