The 10 AI Storylines That Could Define 2026

The honeymoon period for generative tools is ending. By 2026, the focus will shift from the novelty of chat interfaces to the infrastructure that supports them. We are entering an era where the primary concern is not what the software can say, but how it is powered, who owns the weights, and where the data resides. The industry is undergoing a structural shift in how information is processed and distributed across the globe. This is no longer about experimental bots. It is about integrating machine intelligence into the core plumbing of the internet and the physical power grid. Investors and users are beginning to look past the initial excitement to the rising costs of operation and the limits of current hardware. The storylines that will dominate the coming months are those that address these fundamental constraints. We are seeing a move away from centralized cloud dominance toward a more fragmented and specialized environment. The winners will be those who can manage the massive energy requirements and the increasingly complex legal environment surrounding training data.

The Structural Shift in Machine Intelligence

The first major storyline involves the concentration of model power. A small group of companies currently controls the most advanced frontier models. This creates a bottleneck for innovation as smaller players must build on top of these proprietary systems. However, we are seeing a push for open weight models that allow organizations to run high performance systems on their own hardware. This tension between closed and open systems will reach a breaking point as companies decide whether to pay high subscription fees or invest in their own infrastructure. At the same time, the hardware market is diversifying. While one company has dominated the chip market for years, competitors and internal silicon projects from major cloud providers are starting to provide alternatives. This shift in the supply chain is essential for reducing the cost of inference and making large scale deployment sustainable for the average business.

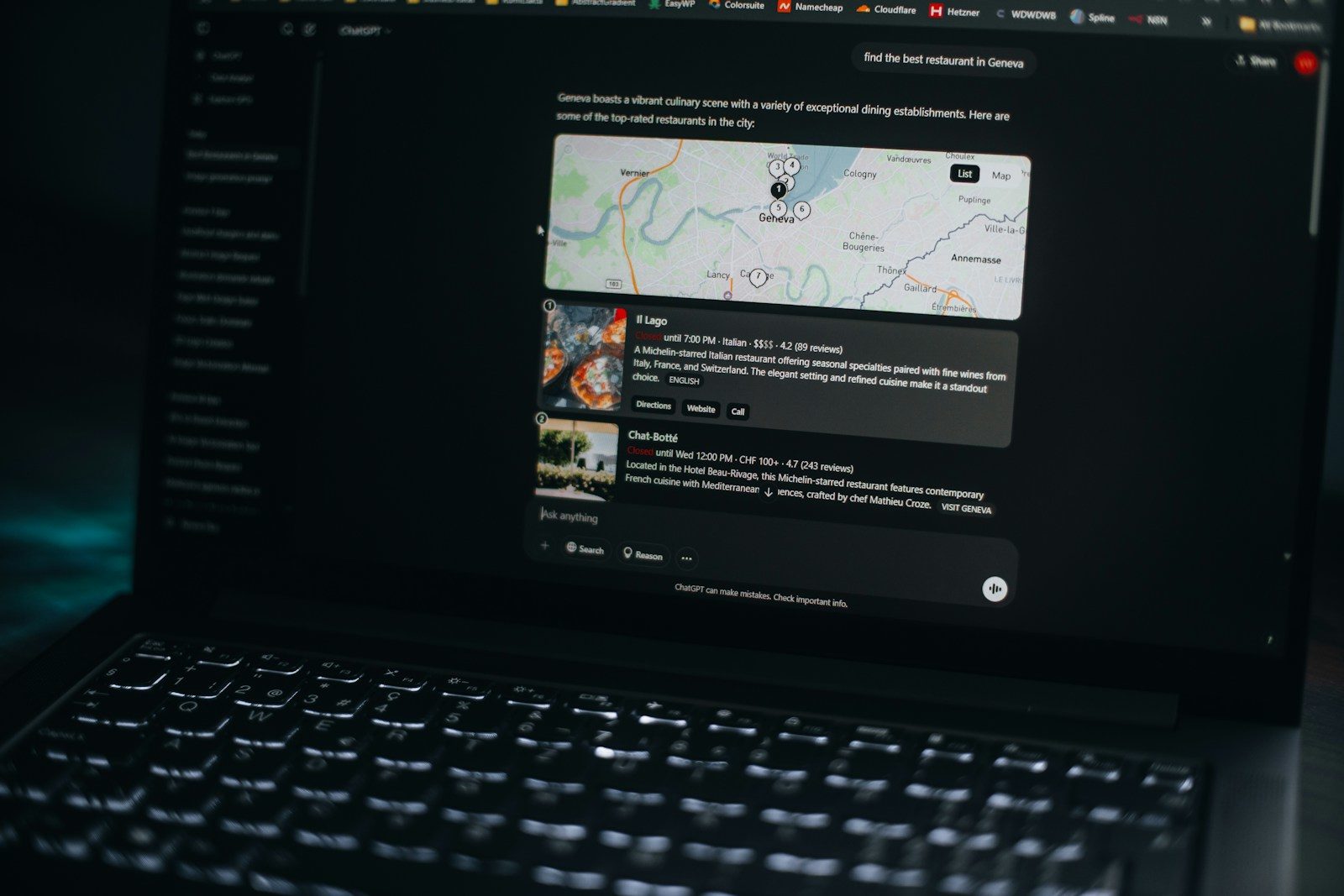

Another critical development is the disruption of search. For decades, the search bar was the entry point to the internet. Now, direct answer engines are replacing the traditional list of links. This changes the economics of the web. If a user gets a complete answer from an AI, they have no reason to click through to a source website. This creates a crisis for publishers and content creators who rely on traffic for revenue. We are also seeing a rise in local AI execution. Instead of sending every query to a remote server, new processors in laptops and phones allow for private, fast, and offline processing. This movement toward the edge is driven by both a need for lower latency and a growing demand for data privacy. Organizations are realizing that sending sensitive corporate data to a third party cloud is a significant risk that must be mitigated through local hardware solutions.

The Global Impact of Automated Systems

The influence of these technologies extends far beyond the tech sector. Governments are now treating AI capabilities as a matter of national security. This has led to a race for silicon sovereignty, where nations invest billions to ensure they have domestic chip production. We are seeing strict export controls and trade restrictions designed to prevent rivals from accessing the most advanced hardware. This geopolitical tension is mirrored in the regulatory space. The European Union and various United States agencies are drafting rules to govern how models are trained and deployed. These regulations focus on transparency, bias, and the potential for misuse in critical sectors like finance and healthcare. The goal is to create a framework that allows for growth while preventing the most dangerous outcomes of automated decision-making.

Energy pressure is the silent crisis of the industry. The demand for electricity from data centers is projected to grow at an unprecedented rate. This is forcing tech companies to become energy providers, investing in nuclear power and massive solar farms to keep their servers running. In some regions, the grid cannot keep up with the demand, leading to delays in data center construction. This creates a geographic shift in where tech is built, favoring areas with cheap and abundant power. Furthermore, the use of automated systems in military contexts is accelerating. From autonomous drones to strategic analysis tools, the integration of machine intelligence into defense systems is changing the nature of conflict. This raises urgent ethical questions about the role of human oversight in lethal decisions and the potential for rapid escalation in automated warfare scenarios.

Real World Integration and Daily Life

On a typical day by 2026, a professional might start their morning by reviewing a summary of overnight communications generated by a local model on their phone. This happens without any data leaving the device, ensuring that private schedules and client names remain secure. During a meeting, a specialized agent might listen to the conversation and cross-reference the discussion with internal company databases in real time. This agent does not just transcribe. It identifies contradictions in project timelines and suggests solutions based on previous successful workflows. This is the reality of the agentic shift, where software moves from being a passive assistant to an active participant in the work process.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

The impact on media and information is equally profound. Deepfakes have moved beyond simple face swaps to high fidelity video and audio that is nearly impossible to distinguish from reality. This has led to a crisis of trust in digital content. To counter this, we are seeing the adoption of cryptographic signatures for authentic media. Every photo or video taken on a smartphone may soon carry a digital watermark that proves its origin. This battle for authenticity is a major storyline for anyone involved in journalism, politics, or entertainment. Consumers are becoming more skeptical of what they see online, leading to a resurgence in the value of trusted brands and verified sources. The cost of verifying information is rising, and those who can provide certainty in an era of synthetic media will hold significant power.
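To make the idea of signed media concrete, here is a minimal sketch of tagging a file at capture time and checking it later. It rests on loud assumptions: real provenance schemes use public-key signatures so that anyone can verify without holding a secret, while this sketch substitutes an HMAC (a shared-secret tag from Python's standard library) to stay self-contained. The key, the sample bytes, and the function names `sign_media` and `verify_media` are all hypothetical.

```python
import hashlib
import hmac


def sign_media(data: bytes, key: bytes) -> str:
    """Return a hex tag that is bound to these exact bytes and this key."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_media(data: bytes, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_media(data, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical device secret and placeholder image bytes (assumptions).
device_key = b"hypothetical-device-secret"
photo = b"raw image bytes captured by the camera"

tag = sign_media(photo, device_key)

print(verify_media(photo, tag, device_key))              # True
print(verify_media(photo + b"edited", tag, device_key))  # False
```

Any change to the bytes, even a single pixel, produces a different tag, which is what lets a verifier detect tampering after capture.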

We must also consider the impact on the labor market. While some jobs are being displaced, others are being transformed. The most significant movement is in the middle management layer, where AI can handle scheduling, reporting, and basic performance tracking. This forces a re-evaluation of what human leadership looks like. The value is shifting toward emotional intelligence, complex problem solving, and ethical judgment. Workers are being asked to oversee fleets of digital agents, requiring a new set of technical and managerial skills. This change is happening faster than educational systems can adapt, creating a talent gap that companies are trying to fill with internal training programs. The divide between those who can effectively use these tools and those who cannot is widening, leading to new forms of economic inequality that governments are only beginning to address.

Socratic Skepticism and the Hidden Costs

We must ask what the true cost of this rapid adoption is. If we rely on three or four major companies for our cognitive infrastructure, what happens when their interests diverge from the public good? The centralization of intelligence is a risk that few are discussing in depth. We are trading local control for cloud based convenience, but the price of that convenience is a total loss of privacy and a dependency on subscription models that can change at any time. There is also the question of the data itself. Most models are trained on the collective output of human culture. Is it ethical for a corporation to capture that value and sell it back to us without compensation for the original creators? The current legal battles over copyright are just the beginning of a much larger conversation about the ownership of information.

There is a tendency to overestimate the near term capabilities of these systems while underestimating their long term structural impact. People expect a general intelligence that can solve any problem, but what we are getting is a series of highly efficient, narrow tools that are integrated into our existing software. The danger is not a rogue machine, but a poorly understood algorithm making decisions about credit scores, job applications, or medical treatments. We are building a world where the logic of the machine is often opaque to the humans who use it. How do we hold a system accountable if we cannot explain why it reached a specific conclusion? These are not just technical problems. They are fundamental questions about how we want our society to function. We must decide if the efficiency gains are worth the loss of transparency and human agency.

The Power User Section

For those building and managing these systems, the focus has shifted to workflow integration and local optimization. The era of just calling a massive API is being replaced by sophisticated orchestration layers. Power users are now looking at the following technical constraints:

- API rate limits and the per-token cost of long context windows.

- The use of quantization to run large models on consumer-grade hardware without significant loss in accuracy.

- The implementation of Retrieval Augmented Generation to ensure models have access to the latest internal data.

- The management of local vector databases for fast and private information retrieval.
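The retrieval items in the list above can be sketched in a few lines. This is a toy illustration, not a production design: the bag-of-words `embed` function stands in for a learned embedding model, `LocalVectorStore` is a hypothetical in-memory class rather than any real vector database, and the sample documents are invented. It shows only the core loop of Retrieval Augmented Generation: embed, rank by similarity, and place the best matches in the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (assumption; real systems use a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class LocalVectorStore:
    """Hypothetical in-memory store; nothing leaves the process."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, store: LocalVectorStore, k: int = 2) -> str:
    """Assemble the retrieved passages and the question into one prompt string."""
    context = "\n".join(f"- {doc}" for doc in store.search(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


store = LocalVectorStore()
store.add("The Q3 launch date moved to October 14.")
store.add("Expense reports are due on the first Friday of each month.")
store.add("The office cafeteria closes at 3 pm.")

prompt = build_prompt("When is the launch date?", store, k=1)
print(prompt)
```

The resulting prompt would then be passed to whichever model the team runs; because the store lives in local memory, the internal documents never have to be sent to a third party for the retrieval step.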

Workflow automation is no longer about simple triggers. It involves chaining multiple models together, where a small, fast model handles initial routing and a larger, more capable model handles the complex reasoning. This tiered approach is necessary to manage costs and latency. We are also seeing specialized hardware such as NPUs (Neural Processing Units) become standard in new computing devices. This allows for persistent, low-power AI features that run in the background of the operating system. For developers, the challenge is no longer just writing code, but managing the lifecycle of the data used to fine-tune these systems. The 20 percent of users who understand these underlying mechanics will be the ones who define the next generation of software architecture.
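The tiered approach described above might look roughly like this. Everything here is an assumption made for illustration: `small_model` and `large_model` are stubs rather than real model calls, the cost figures are invented relative units, and the `classify` heuristic (keywords plus length) stands in for what would normally be the small routing model itself.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tier:
    name: str
    cost_per_call: float  # hypothetical relative cost units
    handler: Callable[[str], str]


def small_model(prompt: str) -> str:
    """Stub for a small, fast model (assumption)."""
    return f"[small] {prompt}"


def large_model(prompt: str) -> str:
    """Stub for a larger, more capable model (assumption)."""
    return f"[large] {prompt}"


REASONING_HINTS = ("why", "compare", "plan", "explain", "trade-off")


def classify(prompt: str) -> str:
    """Stand-in for the routing step: long or reasoning-heavy prompts are 'complex'."""
    text = prompt.lower()
    if len(text.split()) > 30 or any(hint in text for hint in REASONING_HINTS):
        return "complex"
    return "simple"


TIERS = {
    "simple": Tier("small", 1.0, small_model),
    "complex": Tier("large", 20.0, large_model),
}


def route(prompt: str) -> tuple[str, str, float]:
    """Send the prompt to the cheapest tier the classifier considers adequate."""
    tier = TIERS[classify(prompt)]
    return tier.name, tier.handler(prompt), tier.cost_per_call


for question in (
    "What time is the meeting?",
    "Compare the two vendor proposals and explain the trade-offs.",
):
    name, answer, cost = route(question)
    print(f"{name:5} cost={cost:4.1f}  {answer}")
```

The design choice is that the expensive tier is only paid for when the cheap classifier flags a request, so the average cost and latency per request track the share of complex traffic rather than the price of the largest model.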

Hardware constraints are part of the same picture:

- NVMe storage speeds are becoming a bottleneck for loading large model weights into memory.

- Memory bandwidth is more important than raw compute power for many inference tasks.

- The rise of small language models (SLMs) that perform as well as older large models on specific tasks.
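The points about quantization, storage speed, and memory bandwidth can be tied together with some back-of-the-envelope arithmetic. The sketch below uses made-up hardware figures (a 7-billion-parameter model, 5 GB/s NVMe reads, 100 GB/s memory bandwidth) and a deliberately simple model: it counts only weight storage, ignoring activations, caches, and file-format overhead, and it assumes each generated token requires reading every weight once.

```python
GB = 1e9  # decimal gigabyte, in bytes


def weight_bytes(num_params: float, bits_per_weight: int) -> float:
    """Bytes needed to store the weights alone at a given precision."""
    return num_params * bits_per_weight / 8


def load_seconds(size_bytes: float, read_bytes_per_sec: float) -> float:
    """Time to stream the weights from storage at a sustained read rate."""
    return size_bytes / read_bytes_per_sec


def max_tokens_per_sec(size_bytes: float, mem_bytes_per_sec: float) -> float:
    """Rough ceiling on decoding speed if every token reads all weights once."""
    return mem_bytes_per_sec / size_bytes


# Hypothetical figures chosen only for illustration.
NUM_PARAMS = 7e9
NVME_READ = 5 * GB
MEM_BANDWIDTH = 100 * GB

for bits in (16, 8, 4):
    size = weight_bytes(NUM_PARAMS, bits)
    print(
        f"{bits:>2}-bit: {size / GB:5.1f} GB, "
        f"load ~{load_seconds(size, NVME_READ):.1f} s, "
        f"<= {max_tokens_per_sec(size, MEM_BANDWIDTH):.1f} tokens/s"
    )
```

Under these assumptions, going from 16-bit to 4-bit weights shrinks the footprint from 14 GB to 3.5 GB and raises the bandwidth-bound ceiling fourfold, without any change in raw compute. That is the sense in which memory bandwidth can matter more than compute power for inference.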

The Bottom Line

The next two years will be defined by a move toward pragmatism. The industry is moving away from the “move fast and break things” mentality toward a more disciplined approach to building reliable, scalable, and ethical systems. We are seeing the emergence of a new stack where local hardware, specialized models, and strict regulatory compliance are the norm. The storylines that matter are not about the latest chatbot demo, but about the hard work of integrating these tools into the physical and legal structures of our world. Success will not be measured by the complexity of the model, but by the utility and safety it provides to the end user. The transition from hype to utility is well underway, and the results will be more subtle and more pervasive than many expect.
