Why Small Model Improvements Keep Creating Big Shifts

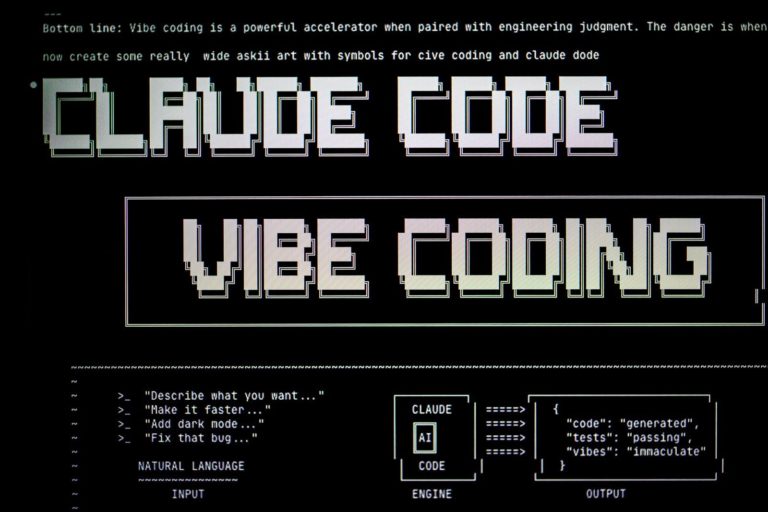

The race to build the largest possible artificial intelligence model is hitting a wall of diminishing returns. While the headline news often focuses on massive systems with trillions of parameters, the real progress is happening in the margins. Small improvements in how these models process data are creating massive shifts in what software can actually do on a daily basis. We are moving away from a period where raw scale was the only metric that mattered. Today, the focus is on how much intelligence we can squeeze into a smaller footprint. This shift makes technology more accessible and faster for everyone. It is not about building a bigger brain anymore. It is about making the existing brains work with far more efficiency. When a model becomes ten percent smaller but retains its accuracy, it does not just save money on server costs. It enables a whole new category of applications that were previously impossible due to hardware constraints. This transition is the most important trend in the tech sector right now because it moves the power of advanced computation from massive data centers into the palm of your hand.

The End of the Bigger is Better Era

To understand why these minor tweaks matter, we have to look at what they actually are. Most of the progress comes from three areas: data curation, quantization, and architectural refinements. For a long time, researchers believed that more data was always better. They scraped the entire internet and fed it into machines. Now, we know that high quality data is far more valuable than sheer volume. By cleaning datasets and removing redundant information, engineers can train smaller models that outperform their larger predecessors. Data curated this way is often called textbook quality data. Another major factor is quantization. This is the process of reducing the precision of the numbers a model uses to make its calculations. Instead of storing each weight as a 16 or 32 bit floating point number, a model might store it as an 8 bit or even 4 bit integer. This sounds like it would ruin the results, but clever math allows the model to stay nearly as smart while requiring a fraction of the memory. You can read more about these technical shifts in recent research on QLoRA and model compression.
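To make the quantization idea concrete, here is a minimal sketch of symmetric 8 bit quantization in plain Python. It is an illustration only: the function names and the sample weights are made up for this example, and real toolchains typically quantize per channel or per block rather than with a single scale for the whole tensor.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate float values."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.42, -1.27, 0.05, 0.88, -0.31]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The worst-case rounding error is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is now stored as a small integer plus one shared scale factor, which is why the memory cost drops while the restored values stay close to the originals.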

Finally, there are architectural changes like attention mechanisms that focus on the most relevant parts of a sentence. These are not massive overhauls. They are subtle adjustments to the math that allow the system to ignore noise. When you combine these factors, you get a model that fits on a standard laptop instead of requiring a room full of specialized chips. People often overestimate the need for massive models for simple tasks. They underestimate how much logic can be packed into a few billion parameters. We are seeing a trend where good enough is becoming the standard for most consumer products. This allows developers to integrate smart features into apps without charging a subscription fee to cover high cloud costs. It is a fundamental change in how software is built and distributed.

Why Local Intelligence Matters More Than Cloud Power

The global impact of these small improvements is hard to overstate. Most of the world does not have access to the high speed internet required to interact with massive cloud based models. When intelligence requires a constant connection to a server in Virginia or Dublin, it remains a luxury for the wealthy. Small model improvements change this by allowing the software to run locally on mid range hardware. This means a student in a rural area or a worker in an emerging market can access the same level of assistance as someone in a tech hub. It levels the playing field in a way that raw scaling never could. The cost of intelligence is dropping toward zero. This is particularly important for privacy and security. When data does not have to leave a device, the risk of a breach is significantly lower. Governments and healthcare providers are looking at these efficient models as a way to provide services without compromising citizen data.

The shift also impacts the environment. Large scale training runs consume vast amounts of electricity and water for cooling. By focusing on efficiency, the industry can reduce its carbon footprint while still delivering better products. Scientific journals like Nature have highlighted how efficient AI could reduce the environmental toll of the industry. Here are a few ways this global shift is manifesting:

- Local translation services that work without any internet connection.

- Medical diagnostic tools that run on portable tablets in remote clinics.

- Educational software that adapts to a student’s needs on low cost hardware.

- Real time privacy filtering for video calls that happens entirely on the device.

- Automated crop monitoring for farmers using inexpensive drones and local processing.

This is not just about making things faster. It is about making them universal. When the hardware requirements drop, the potential user base grows by billions of people. This trend is closely linked to the latest trends in AI development which prioritize accessibility over raw power.

A Tuesday with an Offline Assistant

Consider a day in the life of a field engineer named Marcus. He works on offshore wind turbines where internet access is nonexistent. In the past, if Marcus encountered a mechanical fault he did not recognize, he had to take photos, wait until he returned to shore, and consult a manual or a senior colleague. This could delay repairs by days. Now, he carries a ruggedized tablet with a highly optimized local model. He points the camera at the turbine components and the model identifies the issue in real time. It provides a step-by-step repair guide based on the specific serial number of the machine. The model Marcus uses is not a trillion-parameter giant. It is a small, specialized version that was refined to understand mechanical engineering. This is a concrete example of how a small improvement in model efficiency creates a massive change in productivity.

Later that day, Marcus uses the same device to translate a technical document from a foreign supplier. The translation is near perfect because the model was trained on a small but high quality set of engineering texts. He never had to upload a single file to the cloud. This reliability is what makes the technology useful in the real world. Many people assume that AI must be a generalist to be helpful, but Marcus's experience shows that specialized, small systems are often superior for professional tasks. The small nature of the model is actually a feature, not a bug. It means the system is faster, more private, and cheaper to operate. Marcus received his latest update last week, and the difference in speed was noticeable immediately.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

The contradiction here is that while the models are getting smaller, the work they do is getting bigger. We are seeing a move away from chatting with a bot toward integrating a tool into a workflow. People tend to overestimate the importance of a model being able to write poetry. They underestimate the value of a model that can perfectly extract data from a blurry invoice or identify a hairline crack in a steel beam. These are the tasks that drive the global economy. As these small improvements continue, the line between smart software and regular software will disappear. Everything will just work better. This is the reality of the current tech environment.

Hard Questions About the Efficiency Tradeoff

However, we must apply some Socratic skepticism to this trend. If we are moving toward smaller, more optimized models, what are we leaving behind? One difficult question is whether the focus on efficiency leads to a good enough plateau. If a model is optimized to be fast, does it lose the ability to handle edge cases that a larger model might catch? We must ask if the rush to shrink models is creating a new kind of bias. If we only use high quality data to train these systems, who defines what quality is? We might accidentally filter out the voices and perspectives of marginalized groups because their data does not fit the textbook standard.

There is also the question of hidden costs. While running a small model is cheap, the research and development required to shrink a large model is incredibly expensive. Are we just shifting the energy consumption from the inference phase to the training and optimization phase? Also, as these models become more common on personal devices, what happens to our privacy? Even if the model runs locally, the metadata about how we use it could still be harvested. We need to ask if the convenience of local intelligence is worth the potential for more invasive tracking. If every app on your phone has its own small brain, who is monitoring what those brains are learning about you? We also have to consider the longevity of hardware. If software keeps getting more efficient, will companies still push us to upgrade our devices as often as they do now? Or will this lead to a sustainable era where a five year old phone is still perfectly capable of running the latest tools? These are the contradictions we must face as the technology evolves.

The Engineering Behind the Compression

For the power users and developers, the shift to smaller models is a matter of technical specifics. The most important metric is no longer just the parameter count. It is the bits per parameter. We are seeing a move from 16 bit floating point weights to 8 bit and even 4 bit quantization. This allows a model whose weights would normally require 40 gigabytes of VRAM to fit into roughly 10 gigabytes at 4 bits. This is a massive shift for local storage and GPU requirements. Developers are now looking at LoRA, or Low-Rank Adaptation, to fine tune these models on specific tasks without retraining the entire system. LoRA does this by training a small set of added low-rank matrices while the original weights stay frozen. This makes workflow integrations much easier. You can find technical documentation on these methods at MIT Technology Review.
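The arithmetic behind the 40 gigabyte figure can be sketched in a few lines. The 20 billion parameter count below is an assumption made for this example (the article does not name a model); it is chosen because it yields 40 gigabytes at 16 bits. The calculation covers weights only, so activations and the attention cache would add to the real total.

```python
def weight_memory_gb(num_parameters, bits_per_parameter):
    """Memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = num_parameters * bits_per_parameter / 8
    return total_bytes / 1e9


# Assumed model size for illustration; not taken from the article.
HYPOTHETICAL_PARAMS = 20_000_000_000

for bits in (16, 8, 4):
    size = weight_memory_gb(HYPOTHETICAL_PARAMS, bits)
    print(f"{bits:>2} bit weights: {size:.1f} GB")
```

Halving the bits per parameter halves the weight memory, so going from 16 bits to 4 bits cuts it to a quarter.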

When building applications, you have to consider the following technical limits:

- Memory bandwidth is often a bigger bottleneck than raw compute power for local inference.

- API limits for cloud models are becoming less relevant as local hosting becomes viable for production.

- Context window management is still a challenge for smaller models as they tend to lose track of long conversations faster.

- The choice between FP8 and INT4 precision can affect output quality, including how often the model hallucinates in creative tasks.

- Local storage requirements are shrinking but the need for high speed NVMe drives remains for fast model loading.
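The first point in the list above, that memory bandwidth often matters more than compute, can be illustrated with a back-of-the-envelope estimate. The sketch assumes that generating each token requires reading every weight from memory once, which is a common simplification for single-user local inference. The bandwidth and model sizes are hypothetical numbers, not measurements.

```python
def max_tokens_per_second(model_size_gb, memory_bandwidth_gb_per_s):
    """Rough upper bound on generation speed when each token
    requires streaming all weights from memory once."""
    return memory_bandwidth_gb_per_s / model_size_gb


# Hypothetical device with 100 GB/s of memory bandwidth.
BANDWIDTH_GB_PER_S = 100

for label, size_gb in (("16 bit weights", 40), ("4 bit weights", 10)):
    limit = max_tokens_per_second(size_gb, BANDWIDTH_GB_PER_S)
    print(f"{label}: at most {limit:.1f} tokens per second")
```

Under these assumptions the smaller model is not only easier to fit in memory, it also has a ceiling on generation speed that is four times higher on the same hardware.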

We are also seeing the rise of speculative decoding, where a tiny model predicts the next few tokens and a larger model verifies them. This hybrid approach offers the speed of a small model with the accuracy of a giant. It is a clever way to bypass the traditional trade offs of model size. For anyone looking to stay ahead in this field, understanding these compression techniques is more important than knowing how to build a model from scratch. The future belongs to the optimizers who can do more with less. The focus is shifting from raw power to clever engineering.
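A toy version of speculative decoding shows the control flow. This is a simplified greedy variant written for illustration: the two "models" are hypothetical stand-in functions that map a token list to the next token, and the verification loop calls the large model once per token for readability. Production systems verify the whole draft in one parallel pass and usually accept or reject tokens based on probabilities.

```python
def greedy_decode(target_next, prompt, num_tokens):
    """Baseline: the large model generates every token by itself."""
    tokens = list(prompt)
    for _ in range(num_tokens):
        tokens.append(target_next(tokens))
    return tokens[len(prompt):]


def speculative_decode(draft_next, target_next, prompt, num_tokens, k=4):
    """Greedy speculative decoding: the draft model guesses k tokens,
    the target model keeps guesses until the first disagreement."""
    tokens = list(prompt)
    goal = len(prompt) + num_tokens
    while len(tokens) < goal:
        # 1. The small model cheaply proposes k tokens.
        proposal = []
        for _ in range(k):
            proposal.append(draft_next(tokens + proposal))
        # 2. The large model checks the guesses in order.
        for guess in proposal:
            if len(tokens) >= goal:
                break
            expected = target_next(tokens)
            tokens.append(expected)  # always keep the target's choice
            if expected != guess:
                break  # discard the rest of the draft
    return tokens[len(prompt):]


# Hypothetical stand-ins: the target counts upward modulo 10,
# the draft agrees except that it guesses wrong after a 4.
def toy_target_next(tokens):
    return (tokens[-1] + 1) % 10


def toy_draft_next(tokens):
    return 0 if tokens[-1] == 4 else (tokens[-1] + 1) % 10


output = speculative_decode(toy_draft_next, toy_target_next, [0], 8)
print(output)
```

Because every token that is kept is the one the large model would have chosen, the output matches what the large model would produce alone. The speed gain in real systems comes from checking several draft tokens in a single pass of the large model.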

The Moving Target of Optimal Performance

The bottom line is that the era of bigger is always better is coming to an end. The most significant advancements are no longer about adding more layers or more data. They are about refinement, efficiency, and accessibility. We are seeing a shift that will make advanced computation as common as a calculator. This progress is not just a technical achievement. It is a social one. It brings the power of the most advanced research to everyone, regardless of their hardware or internet connection. It is the democratization of intelligence through the back door of optimization.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.

As we look ahead, the open question remains: will we continue to find ways to shrink intelligence, or will we eventually hit a physical limit that forces us back to the cloud? For now, the trend is clear. Small is the new big. The systems we use tomorrow will be defined not by how much they know, but by how well they use what they have.