Why Open Models Matter Even If You Never Download One

The Invisible Guardrail of Modern Computing

Open models are the silent infrastructure of the modern world. Even if you never download a file from Hugging Face or run a local server, these models dictate the price you pay for proprietary services and the speed at which new features arrive. They act as a competitive floor. Without them, a handful of companies would hold a total monopoly on the most important technology of the century. Open models provide a baseline of capability that forces the big players to keep innovating and keep their pricing models somewhat reasonable. This is not just a hobby for enthusiasts or a niche for researchers. It is a fundamental shift in how power is distributed in the tech industry. When a model like Llama is released, it sets a new standard for what is possible on consumer hardware. This pressure ensures that the closed models you use every day stay sharp and affordable. Understanding the nuances of this openness is the first step in seeing where the industry is headed.

Decoding the Marketing Speak of Openness

There is a lot of confusion about what open actually means in this context. True open source software allows anyone to see the code, modify it, and distribute it. In the world of large language models, this definition gets messy. Most models that people call open source are actually open weight models. This means the company has released the final trained parameters of the model, but has not released the massive datasets used to train it or the specific cleaning scripts used to process that data. Without the data, you cannot truly replicate the model from scratch. You only have the finished product. Then there is the question of licensing. Some companies use custom licenses that look permissive but carry restrictions on commercial use or specific clauses that prevent competitors from using the model. For example, a model might be free for individuals but require a separate license if your company has more than 700 million monthly active users. This is a far cry from the traditional GPL or MIT licenses that built the internet. We also see marketing language that uses words like open to describe an API that is publicly accessible but entirely controlled by a single company. This is not open at all. It is just a product with a public entrance. Genuinely open models allow you to download the files and run them on your own hardware without an internet connection. This distinction is vital because it determines who holds the ultimate kill switch. If you rely on an API, the provider can change the rules or shut you down at any moment. If you have the weights on your hard drive, you own the capability.

Why Nations are Betting on Public Weights

The global impact of these models is hard to overstate. For many countries, relying on a few US-based companies for their entire AI infrastructure is a significant risk to national digital sovereignty. Governments in Europe and Asia are increasingly looking toward open models to build their own localized versions of AI. This allows them to ensure the models reflect their cultural values and linguistic nuances rather than just those of Silicon Valley. It also keeps data within their borders, which is a major concern for privacy and security. Small and medium enterprises benefit from this as well. They can build specialized tools without the fear that their core technology will be pulled out from under them. Open models also lower the barrier to entry for developers in emerging markets. Someone in Lagos or Jakarta can access the same state-of-the-art technology as someone in San Francisco, provided they have the hardware to run it. This levels the playing field in a way that proprietary APIs never can. The existence of these models also creates a massive ecosystem of secondary tools. Developers create ways to make the models run faster or use less memory. This collective innovation moves much faster than any single company can. It creates a feedback loop where open improvements eventually find their way back into the proprietary models we all use.

A Day Without the Cloud

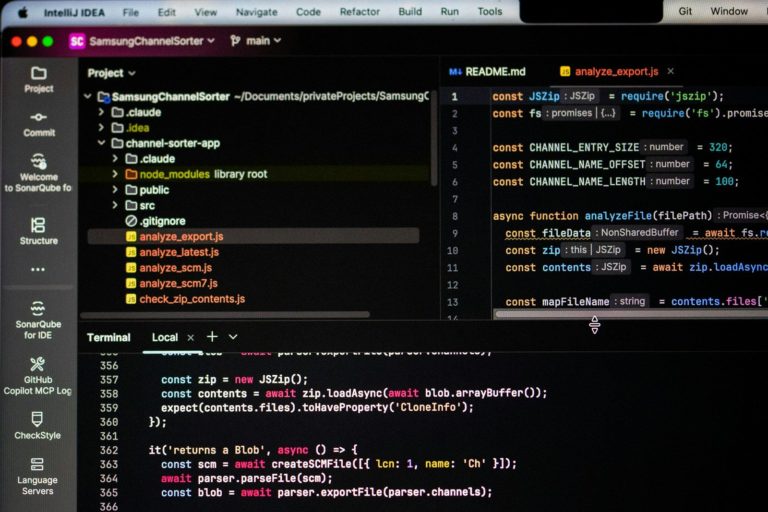

Let us look at how this plays out in a typical day for a software developer named Sarah. Sarah works for a medical startup that handles sensitive patient data. Her company cannot use cloud-based AI because the risk of a data breach is too high and the regulatory hurdles are too steep. Instead, Sarah uses an open weight model running on a secure local server. In the morning, she uses the model to help her refactor a complex piece of code. Because the model is local, she does not have to worry about her proprietary code being used to train a future version of a commercial AI. Later, she uses a fine-tuned version of the model to summarize patient notes. This specific model has been trained on medical terminology, making it more accurate for her needs than a general-purpose model. During her lunch break, Sarah reads a blog post on AI industry analysis about the latest trends in local inference. She realizes she can optimize her workflow further. In the afternoon, she experiments with a new quantization technique that allows her to run a larger model on her existing hardware. This is the beauty of the open ecosystem. She is not waiting for a big tech company to release a new feature. She can implement it herself using tools created by the community. By the end of the day, she has improved the accuracy of her summary tool by fifteen percent. This scenario is becoming common across many industries. From legal firms to creative agencies, people are finding that the control and privacy offered by open models are worth the extra effort of setting them up. They are building tools that are tailored to their specific needs rather than trying to fit their problems into the box of a generic AI assistant. This shift is also visible in the education sector. Universities are using open models to teach students how AI works under the hood. They can inspect the weights and experiment with different training techniques. This creates a more informed and capable workforce for the future. The ability to run these systems offline also means that researchers in remote areas can continue their work without a stable internet connection.

The High Price of Free Software

While the benefits are clear, we must ask difficult questions about the true cost of this openness. Who is actually paying for the massive compute power required to train these models? If a company like Meta spends hundreds of millions of dollars to train a model and then gives the weights away, what is their long term play? Is this a way to kill off smaller competitors who cannot afford to give their products away for free? We also have to consider the safety risks. If a model is truly open, it means the safety guardrails can be removed. This could allow bad actors to use the technology for malicious purposes like creating deepfakes or generating harmful code. How do we balance the need for open innovation with the need for public safety?


Under the Hood of Local Inference

For those looking to integrate these models into their professional workflows, the technical details matter. The most common way to run these models locally is through specialized frameworks. These tools use quantization to reduce the size of the models, allowing them to fit into the VRAM of consumer GPUs. For instance, a model whose weights originally require 40GB of memory at 16-bit precision can be compressed to roughly 10GB at 4-bit precision, usually with only a modest loss in quality. This is done by changing the precision of the weights from 16 bit to 4 bit or even lower. When it comes to APIs, many open models are available through providers like Hugging Face or Together AI. These services can offer higher rate limits than proprietary providers, which makes them attractive for high volume applications. However, the real power comes from local storage and fine tuning. By using techniques like LoRA, you can often train a model on your own data in a few hours on a single GPU. This can create a highly specialized tool that outperforms much larger models on specific tasks. You also need to consider the context window. Many open models now support context windows of 32k or even 128k tokens, allowing you to process entire documents at once. The integration of these models into existing software is getting easier thanks to standardized APIs. This means you can often switch from a closed model to an open one by changing a single line of code in your application, typically the address of the server you send requests to. We expect these tools to become even more accessible to the average developer.
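As a rough back-of-the-envelope check on the quantization figures above, the sketch below estimates how much memory the weights alone need at different precisions. The 20 billion parameter count is a hypothetical value chosen so that 16-bit weights come to 40GB. Real quantized files also store scaling factors, and inference needs extra memory for activations and the context cache, so treat these numbers as a lower bound rather than a measurement.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Hypothetical model size, picked so that 16-bit weights take 40 GB.
N_PARAMS = 20e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit: {weight_memory_gb(N_PARAMS, bits):.1f} GB")
```

Going from 16 bit to 4 bit cuts the weight memory to a quarter. Fitting the same model into less than that means averaging fewer than 4 bits per weight, which usually costs more quality.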

- llama.cpp for cross platform CPU and GPU inference

- Ollama for simplified local model management
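To illustrate the idea of switching between a closed and an open model by changing one line, here is a minimal sketch using only the Python standard library. It assumes both servers accept the widely used OpenAI-style chat completions format, that a local Ollama server is listening on its default port 11434, and that a model named `llama3` has been pulled; the model name and prompt are placeholders. The request is only built here, not sent, so the example runs without any server.

```python
import json
import urllib.request

# Assumed endpoints that both speak the OpenAI-style chat completions format.
HOSTED_BASE_URL = "https://api.openai.com/v1"
LOCAL_BASE_URL = "http://localhost:11434/v1"  # assumed default Ollama address

# The single line to change when moving between hosted and local.
BASE_URL = LOCAL_BASE_URL


def build_chat_request(prompt: str, model: str,
                       api_key: str = "not-needed-locally") -> urllib.request.Request:
    """Build (but do not send) a chat completion request for BASE_URL."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# "llama3" is a placeholder model name; use whatever your server provides.
request = build_chat_request("Summarize this note in one sentence.", model="llama3")
print(request.full_url)

# To actually send it (requires a running server):
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```

In practice the model name usually has to change along with the base URL, so "a single line" is the optimistic case. The point is that the rest of the application code can stay the same.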

The Final Verdict on Choice

The choice between open and closed models is not a binary one. Most people will continue to use a mix of both. Closed models from the large proprietary providers offer convenience, polish, and state-of-the-art performance for general tasks. Open models offer control, privacy, and the ability to specialize. Even if you never download a model yourself, the fact that others can is what keeps the entire industry honest. It ensures that AI remains a tool for everyone rather than a guarded secret for a few. The competition driven by the open community is the most powerful force for good in the tech world today. It forces transparency and democratizes access to the most powerful tools ever created.
