Why Video AI Is Becoming One of the Fastest-Moving Categories

Have you ever watched a video of a golden retriever wearing sunglasses and riding a surfboard, only to realize a few seconds later that the dog does not actually exist? It is an incredible time to be alive, because the world of moving images is changing faster than a toddler on a sugar rush. We are seeing a massive shift in how stories are told: anyone with a bright idea and a laptop can now create clips that approach cinema quality in minutes. This is not just about making funny memes for your group chat, though that is a huge plus. It is about a fundamental change in how we communicate and share our visions with the world. The core takeaway is that video creation is no longer a high-priced club for people with expensive cameras and giant editing suites. It is becoming a universal language open to everyone, and the path from an idea to a finished clip is shrinking from weeks to hours. This year, we are seeing the bar for entry drop so low that imagination is becoming the main limit.

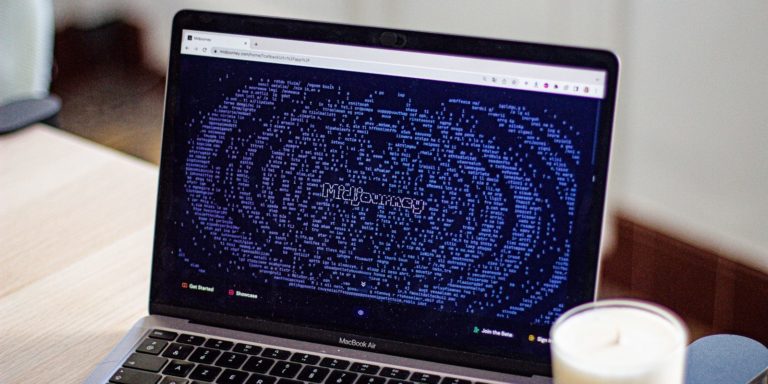

The magic starts with how these tools actually work, and it is a bit like having a digital chef who has tasted every meal ever cooked. Imagine if you could describe a dream to a friend and they could instantly paint it for you, but instead of a still painting, it is a living, breathing scene with light, shadow, and movement. Traditional video is made by capturing light through a lens, but this new wave of tech builds images from scratch based on patterns it has learned from millions of existing videos. It has learned that when a person walks, their hair should bounce, and when the sun sets, the shadows should stretch across the ground. It is not simply copying and pasting pieces of existing footage; it is generating brand-new pixels. Think of it as a very advanced flipbook where the computer draws every single page based on a few words you typed into a box. It might sound like science fiction, but it is happening right now on screens all over the world.

One of the most fascinating parts of this technology is how it handles the tiny details that make a video feel real. In the past, if you wanted to change the weather in a scene, you had to spend hours in a dark room using complicated software to mask out clouds and adjust colors. Now, you can simply ask the AI for a rainy day, and it renders raindrops splashing on the pavement and light reflecting off the puddles. This is what people mean when they talk about realism in synthetic media. We are moving past the days of stiff, robotic movement into a time when the physics of the real world are imitated with increasingly convincing accuracy. Of course, it is not always perfect. Sometimes a hand has six fingers or a person walks through a solid object. Flaws like these can push a clip into what experts call the uncanny valley: that slightly spooky feeling when something looks almost human but not quite right. However, the models are improving so quickly that these glitches are disappearing faster than many people expected.

A World of Stories Without Borders

The global impact of this shift is truly something to cheer about, because it levels the playing field for creators everywhere. In the past, if a small business in a remote village wanted a professional advertisement, it was often held back by the cost of hiring a production crew and buying gear. Today, that same business can produce a polished commercial that looks like it cost thousands of dollars, for roughly the price of a software subscription. This means local stories from every corner of the globe can be told with visual polish that once required a big studio budget. It is a win for diversity and a win for creativity, because we get to see perspectives that were previously hidden behind a paywall of expensive technology. This democratization of tools is a huge reason the category is moving at such a breakneck pace: when millions of people suddenly get access to powerful tools, the number of fresh ideas that bubble up is staggering.

Beyond making things look pretty, this is also a huge win for education and accessibility. Imagine a teacher who can create a custom video lesson that brings a historical event to life as a visual reconstruction, or a scientist who can visualize a complex chemical reaction to show students how molecules interact. By making video production easy and fast, we are opening up new ways to learn and share knowledge. This is especially important for people who learn better through visual aids than through long blocks of text. The ability to turn complex ideas into clear, engaging videos quickly is a superpower now available to anyone with a story to tell. It is also helping brands connect with their audiences in more personal ways. Instead of one generic ad for everyone, a company can create hundreds of personalized videos that speak directly to different groups of people, making the internet feel a little more human and a lot more interesting.

We should also talk about how this affects the people who work in the creative industry. While change can be a bit scary, many editors and directors are finding that these tools act like a supercharged assistant. Instead of spending days on boring, repetitive tasks like removing a stray power line from a shot or color grading a scene, they can let AI handle much of the grunt work in a fraction of the time. This frees them to focus on the fun part of the job: the storytelling and the artistic vision. The goal is to enhance human creativity rather than replace it. Seen from a distance, this is about giving people more time to create and less time stuck behind a loading bar. The distance between having a great idea and seeing it on a screen is shorter than ever, and that is something we can all get excited about.

Many companies are already seeing the benefits of this speed. For example, marketing teams can now test dozens of different video concepts in a single afternoon to see which one resonates best with their audience. This kind of rapid experimentation would have been prohibitively expensive just a few years ago. It allows for a much more dynamic and responsive way of working, where creators can pivot and adapt their message based on real-time feedback. This is a massive shift for the advertising world, where being fast and relevant is the name of the game. By using synthetic actors and generated environments, brands can avoid the logistical nightmares of travel and scheduling, creating content that is both high-quality and efficient to produce. It is a new era of production where the physical limits of the real world no longer dictate what is possible on screen.

Moving Pictures at the Speed of Thought

To really understand how this feels, let us look at a day in the life of Sarah, a solo entrepreneur who runs a small eco-friendly clothing brand. In the old days, Sarah would have spent weeks planning a photoshoot, hiring models, and finding the perfect location. Now, Sarah starts her morning with a cup of coffee and a laptop. She types a prompt into her favorite video AI tool, asking for a scene of a woman walking through a sun-drenched forest wearing a linen shirt. Within minutes, she has a stunning, high-definition clip that looks like it was shot by a professional cinematographer. She then uses an AI editing tool to swap the shirt color to match her new summer collection and adds a synthetic voiceover that sounds warm and inviting. By lunchtime, Sarah has a complete set of social media ads ready to go, all without leaving her home office. Workflows like this are becoming common among creators who are using these tools to build their dreams one frame at a time.

The beauty of this workflow is that it allows for a level of playfulness that was previously too expensive to attempt. Sarah can try out wild ideas, like having her clothes worn by a friendly forest spirit or showing the fabric being woven from magical golden threads. Because the cost of a failed experiment is so low, she can be as bold as she wants. That leads to more unique and memorable content that stands out in a crowded feed. It is not just about saving money; it is about expanding the boundaries of what is possible. For Sarah, the AI is not a replacement for her vision; it is the brush that lets her paint on a digital canvas. She still makes all the big decisions, from the mood of the lighting to the pacing of the edit, while the AI handles the heavy lifting of rendering and generation. It is a partnership that makes her small business feel like a global powerhouse.

This same technology is also making waves in big-budget filmmaking. Directors are using AI to create detailed storyboards and pre-visualizations that help them plan complex action sequences before they ever set foot on a set. This can cut production costs substantially and helps the entire crew stay on the same page. Even in post-production, editing software such as Adobe Premiere Pro is integrating AI features that help editors find the best takes and sync audio automatically. We are also seeing the rise of digital doubles that can perform stunts too dangerous for humans, and of AI dubbing that lets actors appear to deliver their lines in languages they do not actually speak. This opens up a world of possibilities for international co-productions and helps stories reach a much wider audience. The line between what is real and what is generated is blurring, but in a way that can make the movie-going experience more immersive and exciting than ever.

The Magic Behind the Moving Pixels

While we are all very excited about the possibilities, it is natural to have some questions about where all this is going. Who owns the rights to an image created by an AI? How can we make sure people do not use these tools to create misleading content? When the first cameras appeared, some people worried they would steal their souls; every big leap in technology comes with a learning curve. We are currently in a phase of curious exploration, figuring out the best rules for this new playground. Organizations and creators are working together to build systems that protect artists while still allowing for innovation. It is an ongoing conversation, and the aim is to make sure this technology benefits everyone. By staying curious and asking the right questions, we can help ensure that the future of video is not only bright but also fair and responsible for creators across the globe.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Now, for my friends who love to get under the hood, let us talk about the power user side of things. The real heavy lifting in video AI happens through sophisticated workflow integrations and the use of powerful APIs. Platforms like Runway are leading the charge by offering tools that allow you to rotoscope, inpaint, and generate motion with incredible precision. One of the biggest hurdles right now is managing API limits and the massive amount of data required for high-resolution rendering. Many pro users are looking toward local storage solutions and high-end GPUs to handle the processing power needed for long-form content. We are seeing a move toward hybrid systems where the initial generation happens in the cloud, but the fine-tuning and final touches are done locally to ensure total creative control. This balance between cloud speed and local power is where the most interesting developments are happening for tech enthusiasts.
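For readers who script their own cloud workflows, one common way to live within API limits is to retry rate-limited requests with exponential backoff. The sketch below is a minimal, generic Python illustration under stated assumptions: `RateLimitError`, `call_with_backoff`, and `fake_generate_clip` are hypothetical stand-ins, not part of Runway's or any other vendor's actual API, so check your provider's documentation for its real error types and recommended retry policy.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error a cloud video-generation API might raise when a quota is hit."""


def call_with_backoff(request, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Call `request` (any zero-argument callable), retrying on RateLimitError.

    Waits base_delay * 2**attempt seconds between tries, plus up to 10%
    random jitter, and re-raises once max_retries retries are used up.
    """
    for attempt in range(max_retries + 1):
        try:
            return request()
        except RateLimitError:
            if attempt == max_retries:
                raise  # out of retries: let the caller decide what to do
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))


# Demo: a hypothetical API that is rate-limited twice, then succeeds.
attempts = {"n": 0}


def fake_generate_clip():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimitError("quota exceeded")
    return "clip.mp4"


waits = []  # record the waits instead of actually sleeping
result = call_with_backoff(fake_generate_clip, sleep=waits.append)
print(result, len(waits))  # clip.mp4 2
```

Passing `sleep` in as a parameter keeps the retry logic easy to test: the demo records each wait instead of pausing. The random jitter spreads retries out so that many clients hitting the same limit do not all try again at the same instant.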

Another big topic in the geek circles is consistent character generation. In the early days, if you asked an AI to show a character in two different scenes, the results often looked like two completely different people. Now, new techniques are allowing creators to lock in specific features so that a character looks the same across an entire film. This is a huge deal for storytelling because it allows for real character arcs and narrative depth. We are also seeing improvements in how AI handles frame rates and motion blur, making the output look less like a series of still images and more like traditional cinema. For those who really want to dive deep, exploring open-source models and custom training sets is the next big frontier. It lets you teach the AI your own style, creating a visual signature that is hard for anyone else to replicate. The level of customization available is growing every day, and it is a thrilling time to be a power user in this space.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.

If you are looking to get started with these advanced features, here are a few things to keep in mind:

- Check your hardware requirements because rendering high-quality video still takes a lot of processing power.

- Experiment with different prompt structures to see how small changes in wording can lead to completely different visual results.

The integration of these tools into existing software is also a major trend. We are seeing plugins that allow you to use AI generation directly inside programs like After Effects or DaVinci Resolve. This means you do not have to keep switching between different apps, which makes the whole process much smoother. The goal is to create a seamless experience where the AI feels like just another tool in your kit, like a brush or a lens. As we move forward, the focus will likely shift toward even more control, allowing users to direct the AI with gestures or simple sketches. The potential for real-time interaction is huge, especially for things like live broadcasts or interactive gaming. It is a fast-moving category because every new breakthrough opens the door to ten more ideas, and the community of developers is working around the clock to push the boundaries of what is possible.

Here are some of the most common uses for these tools today:

- Creating background environments for virtual sets in film and television.

- Generating realistic stock footage for social media marketing and advertisements.

The bottom line is that we are witnessing a joyful explosion of creativity that is making the world a more colorful and connected place. Video AI is moving so fast because it solves a universal problem: the desire to share our stories in the most vivid way possible without being held back by technical or financial barriers. While there are still some bumps in the road, like the occasional six-fingered hand or a slightly weird walk, the progress we are seeing is nothing short of amazing. The future is bright, and it is being built one pixel at a time by people just like you who have a story to tell. So, grab your digital paintbrush and start creating, because the world is waiting to see what you come up with. It is an exciting journey, and we are all just getting started in this wonderful new era of moving pictures.
