What the AI Industry Is Most Worried About in Law and Regulation

The era of voluntary AI ethics is over. For years, tech giants and startups operated in a space where “principles” and “guidelines” were the only guardrails. That changed with the finalization of the European Union AI Act and a wave of lawsuits in the United States. Today, the conversation has shifted from what AI could do to what AI is legally allowed to do. Legal teams now sit in the same rooms as software engineers. This is not about abstract philosophy anymore. It is about the threat of fines that can reach seven percent of a company's global annual turnover. The industry is bracing for a period where compliance is just as important as compute power. Companies are now forced to document their training data, prove their models are not biased, and accept that some applications are simply illegal. This transition from a largely unregulated environment to a strictly regulated one is among the most significant shifts in the tech sector in decades.

The Shift Toward Mandatory Compliance

The core of the current regulatory movement is a risk-based approach. Regulators are not trying to ban AI. They are trying to categorize it. Under the new rules, AI systems are placed into four buckets: unacceptable risk, high risk, limited risk, and minimal risk. Systems that use biometric identification in public spaces or social scoring by governments are largely banned. These are the unacceptable risks. High-risk systems are the ones that actually affect your life. This includes AI used in hiring, credit scoring, education, and law enforcement. If a company builds a tool to screen resumes, it must now meet strict transparency and accuracy standards. It cannot just claim its algorithm works. It has to prove it through rigorous documentation and third-party audits. This is a massive operational burden for companies that previously kept their internal workings secret.
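For readers who think in code, the four buckets can be pictured as a simple lookup. The sketch below is only an illustration built from the examples in the paragraph above. The names `RiskTier`, `USE_CASE_TIERS`, `classify`, and `obligations` are hypothetical, and the mapping is not a legal classification. A real assessment depends on the text of the law and on legal review.

```python
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal risk"


# Illustrative mapping only, taken from the examples named in this article.
# The keys are hypothetical labels, not terms defined by any regulation.
USE_CASE_TIERS = {
    "public_biometric_identification": RiskTier.UNACCEPTABLE,
    "government_social_scoring": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "education": RiskTier.HIGH,
    "law_enforcement": RiskTier.HIGH,
}


def classify(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a listed use case, or None if it is not listed."""
    return USE_CASE_TIERS.get(use_case)


def obligations(tier: RiskTier) -> List[str]:
    """Duties the article associates with a tier (empty if it names none)."""
    if tier is RiskTier.UNACCEPTABLE:
        return ["do not deploy"]
    if tier is RiskTier.HIGH:
        return [
            "meet transparency and accuracy standards",
            "rigorous documentation",
            "third-party audit",
        ]
    # The article does not spell out duties for the two lower tiers.
    return []
```

Under these assumptions, `classify("hiring")` returns `RiskTier.HIGH`, while an unlisted use case returns `None`, which in practice would mean "ask a lawyer" rather than "no rules apply."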

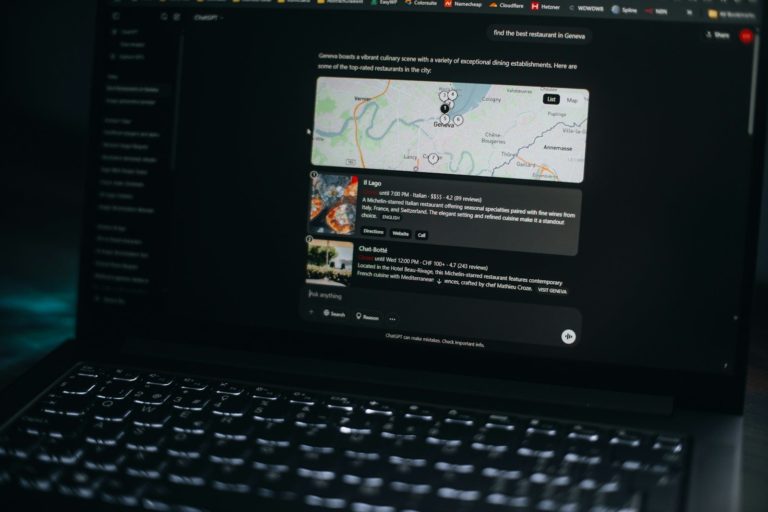

General purpose AI models, like the large language models that power chatbots, have their own set of rules. These models must disclose if their content was generated by AI. They also have to provide summaries of the copyrighted data used to train them. This is where the tension lies. Most AI companies consider their training data a trade secret. Regulators now say that transparency is a requirement for market entry. If a company cannot or will not disclose its data sources, it may find itself blocked from the European market. This is a direct challenge to the “black box” nature of modern machine learning. It forces a level of openness that the industry has resisted for years. The goal is to ensure that users know when they are interacting with a machine and that creators know if their work was used to build that machine.

The impact of these rules extends far beyond Europe. This is often called the Brussels Effect. Because it is difficult to build different versions of a software product for every country, many companies will simply apply the strictest rules globally. We saw this with data privacy laws a few years ago. Now we are seeing it with AI. In the United States, the approach is different but equally impactful. Instead of one giant law, the US is using executive orders and a flurry of high-profile lawsuits to set boundaries. The US Executive Order from 2023 focused on safety testing for the most powerful models. Meanwhile, the courts are deciding if training an AI on copyrighted books and news articles is “fair use” or “theft.” These legal battles will define the economic future of the industry. If companies have to pay to license every piece of data, the cost of building AI will skyrocket.

China has also moved quickly to regulate generative AI. Their rules focus on ensuring that AI output is accurate and aligns with social values. They require companies to register their algorithms with the government. This creates a fragmented global environment. A developer in San Francisco now has to worry about the EU AI Act, US copyright law, and Chinese algorithm registration. This fragmentation is a major concern for the industry. It creates a high barrier to entry for smaller players who cannot afford a massive legal department. The fear is that only the largest tech companies will have the resources to stay compliant in every region. This could lead to a situation where a few giants control the entire market because they are the only ones who can afford the “compliance tax.”

In the real world, this looks like a fundamental change in how products are built. Imagine a product manager at a mid-sized startup. A year ago, their goal was to ship a new AI feature as fast as possible. Today, their first meeting is with a compliance officer. They have to track every dataset they use. They have to test their model for “hallucinations” and bias. They have to create a “human in the loop” system to oversee the AI's decisions. This adds months to the development cycle. For a creator, the impact is different. They are now looking for tools that can prove they were not trained on stolen work. We are seeing the rise of “licensed AI” where every image and sentence in the training set is accounted for. This is a move toward a more sustainable but more expensive way of building technology.

A day in the life of a compliance officer now involves “red teaming” sessions where they try to break their own AI. They look for ways the model might give dangerous advice or show prejudice. They document these failures and the fixes. This documentation is not just for internal use. It must be ready for inspection by government regulators at any time. This is a far cry from the “move fast and break things” era. Now, if you break things, you might face a lawsuit from a major news organization or a fine from a government agency. The EU AI Act has turned AI development into a regulated profession, similar to banking or medicine. The stakes are no longer just about user experience; they are about legal survival.

The industry is also grappling with the “Copyright Trap.” Major publishers like the New York Times have sued AI companies for using their articles without permission. These cases are not just about money. They are about the right to exist. If the courts rule that AI training is not fair use, the entire business model of generative AI could collapse. Companies would have to delete their current models and start over with licensed data. This is why we see companies like OpenAI signing deals with news organizations. They are trying to get ahead of the legal risk. They are trading cash for the legal right to use data. This creates a new economy where data is the most valuable commodity.


Socratic skepticism suggests we should ask who these rules actually protect. Do they protect the public, or do they protect the incumbents? If the cost of compliance is millions of dollars, a two-person startup in a garage cannot compete. We might be accidentally creating a monopoly for the companies that already have the money. There is also the question of privacy. To prove an AI is not biased against a certain group, a company might need to collect more data about that group. This creates a paradox where more surveillance is required to ensure “fairness.” We must also ask about the environmental cost. If regulation requires constant testing and retraining of models to meet new standards, the energy consumption of these data centers will grow even faster. Are we willing to accept that trade-off?

Another difficult question is the definition of “truth.” Regulators want AI to be “accurate.” But who decides what is accurate in a political or social context? If a government can fine a company for an “inaccurate” AI response, that government essentially has a tool for censorship. This is a major concern in countries with less-than-perfect records on human rights. The industry is worried that “safety” will become a code word for “state-approved content.” We are also seeing a push for “watermarking” AI content. While this sounds good for stopping deepfakes, it is technically difficult to implement. A clever user can often strip a watermark away. If we rely on a technology that can be easily bypassed, are we creating a false sense of security? The hidden costs of these regulations are often buried in the fine print.

For power users and developers, the geeky side of regulation is found in the technical requirements for model reporting. We are seeing the rise of model cards, which are standardized documents that list a model’s training data, performance benchmarks, and known limitations. These are becoming as common as “readme” files in GitHub repositories. Developers are also having to build “transparency APIs” that allow third-party researchers to audit their systems without seeing the underlying code. This is a complex engineering challenge. How do you give someone enough access to verify your model’s safety without giving away your intellectual property? The industry is currently debating the standards for these APIs and the limits of what should be shared.
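To make the idea of a model card concrete, here is a minimal sketch that stores the three pieces of information mentioned above and exports them as JSON. The class name `ModelCard`, its field names, and the `missing_sections` helper are assumptions made for illustration. They do not follow any official schema, and real model cards usually carry more detail.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelCard:
    """Hypothetical, minimal model card: not an official or standard format."""

    model_name: str
    training_data: List[str]          # short descriptions of data sources
    benchmarks: Dict[str, float]      # metric name -> score
    known_limitations: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the card so it can sit next to a readme in a repository."""
        return json.dumps(asdict(self), indent=2)

    def missing_sections(self) -> List[str]:
        """Names of sections left empty, so gaps are caught before publishing."""
        sections = {
            "training_data": self.training_data,
            "benchmarks": self.benchmarks,
            "known_limitations": self.known_limitations,
        }
        return [name for name, value in sections.items() if not value]
```

A card created without any listed limitations would report `known_limitations` as missing, which is the kind of gap an auditor would likely ask about first.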

Local storage and “edge AI” are becoming more popular as a way to avoid some regulatory hurdles. If the AI processing happens on a user’s phone rather than in the cloud, it is easier to comply with strict data privacy laws. However, this limits the power of the AI. Developers are now balancing the need for massive cloud compute with the legal safety of local inference. We are also seeing the implementation of “kill switches” in AI code. These are protocols that can shut down a model if it begins to exhibit “emergent behaviors” that were not predicted during testing. This is no longer science fiction. It is a requirement for high-risk systems. Compliance is being baked directly into the software architecture, from the database schema to the API rate limits.
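In its simplest form, a kill switch is a wrapper that sits between the user and the model and refuses to pass anything through once it has been tripped. The sketch below shows that pattern under stated assumptions: `KillSwitch`, `ModelHalted`, and the `is_unsafe` check are hypothetical names, the model is any function that turns a prompt into text, and detecting genuinely unexpected behavior is a much harder problem than the simple check shown here.

```python
from typing import Callable


class ModelHalted(RuntimeError):
    """Raised when the wrapped model has been shut down."""


class KillSwitch:
    """Hypothetical wrapper that stops serving a model once it is tripped."""

    def __init__(
        self,
        model: Callable[[str], str],
        is_unsafe: Callable[[str], bool],
    ) -> None:
        self._model = model
        self._is_unsafe = is_unsafe  # assumed safety check supplied by the team
        self.halted = False

    def trip(self) -> None:
        """Manual shutdown, for example by a human overseer."""
        self.halted = True

    def __call__(self, prompt: str) -> str:
        if self.halted:
            raise ModelHalted("model is shut down pending review")
        output = self._model(prompt)
        if self._is_unsafe(output):
            # Latch the switch: every later call fails until a human resets it.
            self.halted = True
            raise ModelHalted("unsafe output detected; model shut down")
        return output
```

The important design choice is that the switch latches. After one flagged output, or one manual call to `trip()`, the wrapper keeps refusing requests instead of quietly resuming, which leaves the decision to restart with a person.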

The bottom line is that the AI industry is maturing. The transition from a research curiosity to a regulated utility is painful and expensive. Companies that ignore the legal shift will not survive the next five years. The focus has moved from “can we build it” to “should we build it” and “how do we document it.” This change will likely slow down the pace of innovation in the short term, but it may lead to more stable and trustworthy technology in the long run. The rules are still being written, and the lawsuits are still being settled. What is clear is that the “wild west” is gone. The future of AI will be defined by lawyers and lawmakers just as much as by engineers and data scientists. The industry is worried, but it is also adapting to the new reality of a regulated world.

