The AI Data Centre Boom Explained Simply

The Physical Reality of the Cloud

Artificial intelligence is often discussed as a ghost in the machine. We talk about chatbots and image generators as if they exist in a void. The reality is far more industrial. Every time you ask a large language model a question, a massive facility somewhere in the world hums with activity. These buildings are not just warehouses for servers. They are the new power plants of the information age. They consume vast amounts of electricity and require constant cooling to keep their processors from overheating. The scale is difficult for most people to grasp. We are seeing a construction surge that rivals the industrial expansion of the nineteenth century. Companies are spending billions of dollars to secure land and power before their competitors do. This is not just a digital trend. It is a massive physical expansion of our built environment. The cloud is made of steel, concrete, and copper. Understanding this shift is vital for anyone who wants to know where the technology industry is headed. It is a story of physical limits and local politics.

Concrete and Copper

A modern data centre is a specialized industrial facility designed to house thousands of high-performance computers. Unlike the server rooms of the past, these buildings are optimized for the intense heat and power demands of AI chips. The sheer size of these sites is increasing. A typical large-scale facility can cover over 50,000 m² of floor space. Inside, rows of racks hold specialized hardware like the Nvidia H100. These chips are designed to process the massive mathematical arrays required for machine learning. This process generates an incredible amount of heat. Cooling systems are no longer an afterthought. They are the primary engineering challenge. Some facilities use giant fans to move air, while newer designs use liquid cooling, in which chilled water is piped directly to the processors.
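To get a feel for why cooling dominates the engineering, here is a minimal Python sketch of the basic heat balance behind liquid cooling. It assumes all of a rack's electrical power ends up as heat in the water loop. The 100 kW rack and the 10 K temperature rise are illustrative assumptions, and the function name is made up for this example.

```python
# Sketch: water flow needed to carry a given heat load away from a rack.
# All inputs are illustrative assumptions, not measurements from a real site.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate specific heat of liquid water


def coolant_flow_kg_per_s(heat_load_watts: float, temp_rise_kelvin: float) -> float:
    """Mass flow from the steady-state heat balance Q = m_dot * c * delta_T."""
    if temp_rise_kelvin <= 0:
        raise ValueError("temp_rise_kelvin must be positive")
    return heat_load_watts / (WATER_SPECIFIC_HEAT_J_PER_KG_K * temp_rise_kelvin)


if __name__ == "__main__":
    # Hypothetical rack: 100 kW of heat, water allowed to warm by 10 K.
    flow = coolant_flow_kg_per_s(100_000, 10)
    # One kilogram of water is roughly one litre.
    print(f"{flow:.2f} kg/s, roughly {flow * 60:.0f} litres per minute")
```

Under these assumptions a single rack needs a little over two litres of water per second flowing past its chips, which hints at why plumbing has become as important as wiring.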

The constraints on building these sites are entirely physical. First, you need land that is close to major fiber optic lines. Second, you need a massive amount of power. A single large data centre can consume as much electricity as a small city. Third, you need water for the cooling towers. Thousands of gallons are evaporated every day to keep the temperatures stable. Finally, you need permits. Local governments are increasingly hesitant to approve these projects because they put a strain on the local grid. This is why the industry is moving away from abstract talk about software and toward hard negotiations over utility connections and zoning laws. The bottleneck for AI growth is no longer just code. It is how fast we can pour concrete and lay high voltage cables. According to the International Energy Agency, data centre electricity consumption could double by 2026. This growth is forcing a total rethink of how we build industrial infrastructure.
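A doubling sounds abstract until it is turned into a yearly rate. The short Python sketch below does that arithmetic. The text above does not say which year the doubling is measured from, so the four-year window used here is an assumption, and the function name is hypothetical.

```python
# Sketch: convert "grows by some multiple over N years" into a yearly rate.

def implied_annual_growth(multiple: float, years: float) -> float:
    """Constant yearly growth rate that turns 1 into `multiple` after `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Assumption: the doubling happens over four years.
    rate = implied_annual_growth(2.0, 4)
    print(f"Implied growth: {rate:.1%} per year")
```

With a four-year window, doubling works out to roughly 19 percent growth every year. A shorter window would imply an even steeper rate.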

The New Geopolitics of Power

Data centres have become strategic national assets. In the past, countries competed over oil or manufacturing hubs. Today, they compete for compute. Having large-scale AI infrastructure within your borders provides a significant advantage for national security and economic growth. This has led to a global race to build. Northern Virginia remains the largest hub in the world, but new clusters are emerging in places like Ireland, Germany, and Singapore. The choice of location is driven by the stability of the power grid and the local climate. Cooler climates are preferred because they reduce the energy needed for air conditioning. However, the concentration of these facilities is creating political tension. In some countries, data centres consume more than 20 percent of the national electricity supply.

This concentration makes infrastructure a matter of foreign policy. Governments are now looking at data centres as critical infrastructure that must be protected. There is also a push for data sovereignty. Many nations want the data of their citizens to be processed locally rather than in a facility across the ocean. This requirement forces tech giants to build in more locations, even where power is expensive. The global supply chain for the components is also under pressure. From the specialized transformers needed for the electrical substations to the backup diesel generators, every part of the build is seeing long lead times. This is a physical arms race. The winners will be the ones who can navigate the complex web of local regulations and energy markets. You can read more about the latest AI infrastructure trends to see how this is unfolding in real time. The map of global power is being redrawn by where the fiber meets the fence line.

Life in the Shadow of the Server

Consider a small town on the edge of a major metropolitan area. For decades, the land was used for farming or sat empty. Then, a major tech company buys hundreds of acres. Within months, massive windowless boxes begin to rise. For the residents, the impact is immediate. During the construction phase, hundreds of trucks clog the local roads. Once the facility is operational, the noise becomes the primary concern. The giant cooling fans create a constant low frequency hum that can be heard for miles. It is a sound that never stops. For a family living nearby, the quiet of the countryside is replaced by the sound of a thousand jet engines that never take off. This is the reality of living next to the engine of the modern economy.

Local resistance is growing. In places like Arizona and Spain, residents are protesting the use of precious water supplies for cooling. They argue that in a time of drought, the water should go to people and crops, not to cooling chips that generate advertisements or write emails. Local councils are caught in the middle. On one hand, these facilities bring in massive amounts of tax revenue without requiring much in the way of schools or emergency services. On the other hand, they provide very few permanent jobs once construction is finished. A building that covers 100,000 m² might only employ fifty people. This creates a disconnect between the economic value of the building and its benefit to the local community. The political debate is shifting from how to attract tech to how to limit its footprint.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Hard Questions for the Silicon Age

The rapid expansion of AI infrastructure raises several difficult questions that the industry is not yet ready to answer. First, we must ask who truly benefits from this massive consumption of resources. If a data centre uses enough electricity to power 50,000 homes, is the value of the AI it produces worth the strain on the grid? There is a hidden cost to every search query and every generated image that is currently being subsidized by the environment and local taxpayers. Second, what happens to the privacy of the data stored in these massive hubs? As we centralize more of our digital life into fewer, larger buildings, they become primary targets for both physical and cyber attacks. The concentration of data creates a single point of failure that could have catastrophic consequences.
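The "50,000 homes" comparison can be turned into a power figure with some simple arithmetic. The Python sketch below assumes an average household uses 10,000 kWh of electricity per year. That number is a placeholder chosen for illustration, since real household consumption varies widely between countries, and the function name is hypothetical.

```python
# Sketch: translate "powers N homes" into an average power draw in megawatts.

HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def average_power_mw(homes: int, kwh_per_home_per_year: float) -> float:
    """Average continuous power (MW) needed to supply the given number of homes."""
    annual_kwh = homes * kwh_per_home_per_year
    average_kw = annual_kwh / HOURS_PER_YEAR
    return average_kw / 1000.0  # kW -> MW


if __name__ == "__main__":
    # Assumption: 10,000 kWh per home per year (illustrative only).
    print(f"{average_power_mw(50_000, 10_000):.1f} MW")
```

Under that assumption, 50,000 homes corresponds to a steady draw of roughly 57 MW, running around the clock.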

We also need to consider the long term sustainability of this model. Many tech companies claim they are carbon neutral by buying energy offsets. However, an offset does not change the fact that the facility is pulling real power from a grid that may still rely on coal or gas. The physical demand is immediate, while the green energy projects often take years to come online. Is this a sustainable way to build a global economy? We are essentially betting that the efficiency gains from AI will eventually outweigh the massive energy cost of creating it. This is a gamble with no guarantee of success. Finally, what happens to these buildings if the AI boom cools down? We have seen previous eras of overbuilding lead to “ghost” data centres. These massive structures are difficult to repurpose for anything else. They are monuments to a specific moment in technical history. If the demand for compute drops, we will be left with giant, empty boxes that serve no purpose. We must ask if we are building for a permanent shift or a temporary spike.

The Architecture of Massive Compute

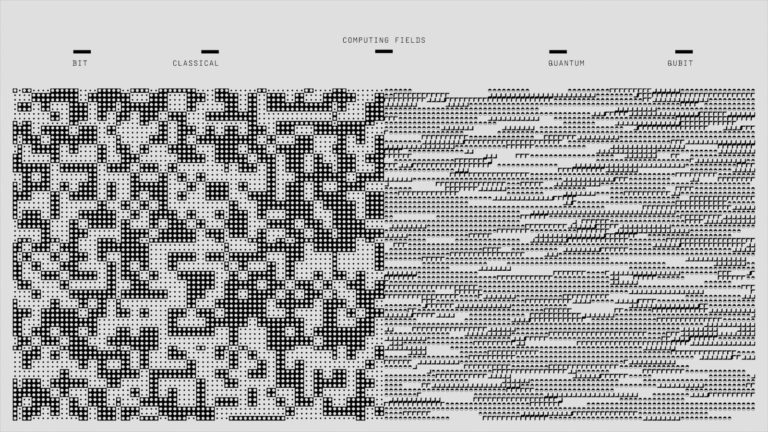

For the power users and engineers, the interest lies in the internal architecture of these sites. We are moving away from general purpose servers toward highly specialized clusters. The primary unit of the AI data centre is the pod. A pod consists of several racks of GPUs connected by high speed networking like InfiniBand. This allows the chips to work together as a single giant computer. The bandwidth requirements between these chips are staggering. If the connection is too slow, the expensive GPUs sit idle, wasting power and money. This is why the physical layout of the cables inside the building is just as important as the code running on the chips. The latency of a few meters of copper can impact the training time of a model.
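The cost of a slow connection can be shown with a toy model. The Python sketch below assumes each training step has a compute phase followed by a data exchange, and that some fraction of the exchange can be hidden behind compute. The step time, data volume, and link speeds are hypothetical numbers, not figures for any real cluster or for InfiniBand specifically.

```python
# Sketch: how interconnect speed affects the share of time GPUs spend computing.
# Toy model with made-up inputs; real training overlaps work in more complex ways.

def gpu_utilization(
    compute_seconds: float,
    bytes_exchanged: float,
    link_bytes_per_second: float,
    overlap_fraction: float = 0.0,
) -> float:
    """Fraction of each step spent on useful compute.

    overlap_fraction is the share of communication hidden behind compute
    (0.0 means none is hidden, 1.0 means all of it is).
    """
    if not 0.0 <= overlap_fraction <= 1.0:
        raise ValueError("overlap_fraction must be between 0 and 1")
    comm_seconds = bytes_exchanged / link_bytes_per_second
    exposed_seconds = comm_seconds * (1.0 - overlap_fraction)
    return compute_seconds / (compute_seconds + exposed_seconds)


if __name__ == "__main__":
    # Hypothetical step: 0.1 s of compute, 10 GB exchanged between chips.
    for label, speed in [("slow link, 50 GB/s", 50e9), ("fast link, 400 GB/s", 400e9)]:
        print(f"{label}: {gpu_utilization(0.1, 10e9, speed):.0%} busy")
```

In this toy setup the slow link leaves the GPUs busy only about a third of the time, while the fast link keeps them busy about 80 percent of the time.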

Workflow integration is another major hurdle. Most companies do not own their own data centres. They rent space and compute through APIs from providers like Amazon or Microsoft. However, these providers are hitting capacity limits. We are seeing a shift where large companies are trying to move their workloads to smaller, regional providers or even building their own private clouds to ensure they have guaranteed access to hardware. Local storage is also making a comeback. While the processing happens in the cloud, the massive datasets required for training are often kept on site to avoid the cost and time of moving petabytes of data over the public internet. This creates a hybrid model where the data stays local but the compute is distributed. The technical specifications of these sites are now defined by three main factors:

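- Power density per rack, which has increased from 10 kW to over 100 kW in some AI designs.

- Cooling efficiency, measured by Power Usage Effectiveness (PUE): the ratio of the total power a facility draws to the power that actually reaches the computing equipment.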

- Interconnect speed, which determines how effectively the GPUs can communicate during training.

These metrics are the new benchmarks for the industry. If you cannot get the power to the rack or the heat out of the building, the fastest chip in the world is useless. This is the engineering reality behind the AI boom. It is an engineering challenge of the highest order.
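The first two metrics interact in a simple way, sketched below in Python. PUE is total facility power divided by the power delivered to IT equipment, so a lower value leaves more of the grid connection for computing. The 50 MW connection and the PUE of 1.25 are hypothetical values, and the function names are made up for this example. The rack figures are the 10 kW and 100 kW mentioned in the list above.

```python
# Sketch: PUE and rack density under a fixed grid connection.
# Facility size and PUE are illustrative assumptions.

def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    return total_facility_kw / it_equipment_kw


def racks_supported(facility_kw: float, pue_value: float, rack_kw: float) -> int:
    """Whole racks that fit in the IT power budget left after overhead."""
    if pue_value < 1.0 or rack_kw <= 0:
        raise ValueError("pue_value must be >= 1 and rack_kw must be positive")
    it_budget_kw = facility_kw / pue_value
    return int(it_budget_kw // rack_kw)


if __name__ == "__main__":
    facility_kw = 50_000  # hypothetical 50 MW grid connection
    print("PUE:", pue(50_000, 40_000))
    print("10 kW racks:", racks_supported(facility_kw, 1.25, 10))
    print("100 kW racks:", racks_supported(facility_kw, 1.25, 100))
```

With these inputs the same connection feeds 4,000 older racks or only 400 dense AI racks. The building may look the same from outside, but the power is concentrated in a tenth of the cabinets, which is why cooling each rack becomes so much harder.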

The Final Verdict on Infrastructure

The AI data centre boom is the most significant physical expansion of the tech industry in decades. It has moved the conversation from the boardroom to the zoning board. We are no longer just talking about algorithms. We are talking about the capacity of the electrical grid and the rights to local water. This shift creates a visible contradiction. We want the benefits of advanced AI, but we are increasingly unwilling to host the infrastructure required to run it. This tension will define the next decade of technical development. The open question remains: can we find a way to build these facilities that is compatible with the needs of the communities that host them? If we cannot, the AI era may hit a physical wall before it ever reaches its full potential.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
