How to Start Using AI Without Feeling Lost

The era of treating artificial intelligence as a mysterious oracle is over. Most people approach these tools with a mix of anxiety and overblown expectations, often expecting a digital god that can solve every problem with a single sentence. The reality is far more mundane and useful. Modern AI is simply a new category of software that excels at pattern recognition and linguistic synthesis. To stop feeling lost, you must stop looking for magic and start looking for utility. Practicality matters more than novelty in this space. If a tool does not save you thirty minutes of tedious work or help you clarify a difficult thought, it is not worth your time. The current shift in the industry is moving away from the shock of what machines can say toward the utility of what they can do. This guide moves past the hype to show you how to integrate these systems into your daily routine without the confusion that usually follows new technology adoption.

The End of the Magic Trick

To understand why you might feel lost, you have to understand what these systems actually are. Most users bring a search engine mindset to a generative model. When you use a search engine, you are looking for a specific record in a database. When you use a model like GPT-4 or Claude, you are interacting with a probability engine. These models do not know facts in the way humans do. Instead, they predict the next most likely word in a sequence based on vast amounts of training data. This is why they can sometimes state falsehoods with absolute confidence. This phenomenon is often called hallucination, but it is actually the system working exactly as intended. It is always predicting, even when it lacks the specific data to be accurate.
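To make "predicting the next most likely word" concrete, here is a deliberately tiny sketch. It is not how GPT-4 or Claude work internally (those use large neural networks); it only counts which word follows which in a made-up sentence. The function names and the sample text are illustrative assumptions.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word` and its estimated probability."""
    counts = follows.get(word.lower())
    if not counts:
        # The toy model has never seen this word, so it has nothing to offer.
        return None, 0.0
    best, n = counts.most_common(1)[0]
    return best, n / sum(counts.values())

# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)
print(predict_next(model, "the"))
```

The toy model answers "cat" after "the" because that pairing was most common in its training text, not because it knows anything about cats. Real models are vastly larger, but the same caution applies: a likely continuation is not the same as a verified fact.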

The confusion usually stems from the conversational interface. Because the machine speaks like a human, we assume it thinks like one. It does not. It lacks a mental model of the world. It does not have feelings, goals, or a sense of truth. It is a highly sophisticated calculator for language. Once you accept that you are talking to a statistical mirror rather than a sentient being, the frustration of “wrong” answers begins to fade. You start to see the tool as a collaborator for drafting, summarizing, and brainstorming rather than a definitive source of truth. This distinction is the first step toward mastery. You must verify everything it produces, especially when the stakes are high. The recent changes in these models have made them faster and more coherent, but the underlying logic remains a matter of math rather than meaning. This is why human review remains the most critical part of the process. Without your oversight, the machine is just a loud, confident guesser.

A Shift in Global Productivity

The impact of this technology is not limited to Silicon Valley. It is felt in every corner of the globe where people use computers to communicate. For a small business owner in Nairobi or a student in Seoul, these tools provide a way to bridge linguistic and technical gaps that were previously insurmountable. High quality translation and coding assistance are now available to anyone with an internet connection. This is not about replacing workers but about changing the baseline of what one person can accomplish. In the past, writing a complex script or drafting a legal document required specialized training or expensive consultants. Now, those tasks can be initiated by anyone, provided they have the critical thinking skills to guide the machine.

We are seeing a massive shift in how information is processed across borders. Organizations are using these models to parse thousands of pages of international regulations or to localize marketing content in seconds. This speed has a cost, though. As more people use these tools, the amount of generic, AI generated content on the internet is increasing. This makes original, human thought more valuable than ever before. The global workforce is currently in a period of rapid adjustment where the ability to prompt a machine is becoming as fundamental as the ability to use a word processor. Those who learn to use these tools as an extension of their own expertise will find themselves at a significant advantage. The goal is to use the machine to handle the heavy lifting of structure and syntax so you can focus on strategy and nuance. This shift is happening in real time, and it is affecting every industry from healthcare to finance.

Making the Tools Work for You

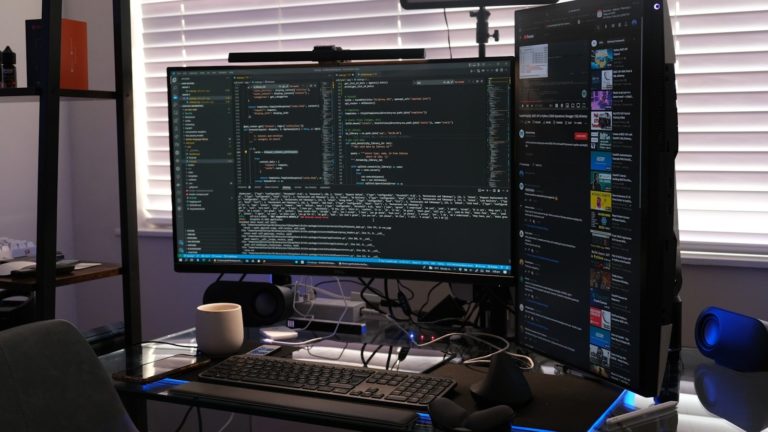

Let us look at a day in the life of someone who has integrated these tools effectively. Imagine a project manager who starts their morning with fifty unread emails. Instead of reading every single one, they use a tool to summarize the threads and identify which ones require immediate action. By ten in the morning, they have drafted three project proposals by providing the AI with raw notes and asking it to organize them into a standard format. This is where the real value lies. It is not about the machine doing the thinking, but about the machine doing the formatting. Later in the afternoon, they might encounter a technical error in a spreadsheet. Instead of searching forums for an hour, they describe the error to the AI and receive a corrected formula in seconds. This is a concrete payoff that changes the tempo of a workday.

Consider the example of a writer struggling with a blank page. They can use a model to generate five different outlines for an article. They might hate four of them, but the fifth one might spark an idea they hadn’t considered. This is a collaborative process. The writer is still the architect, but the AI is the tireless assistant providing materials. Products like ChatGPT from OpenAI or Claude from Anthropic have made this accessible through simple chat interfaces. However, the tactic fails when you ask the machine to be the final word. If you let the AI write your entire report without checking the data, you are likely to include errors that a human would never make. The confusion readers bring is often the belief that the AI is a “set it and forget it” solution. It is not. It is a power tool that requires a steady hand and a watchful eye. You must remain the editor in chief of your own life. The machine can provide the draft, but you must provide the soul and the accuracy. This is the only way to ensure the output remains relevant and trustworthy in a professional setting.

The Hidden Costs of Efficiency

While the benefits are clear, we must apply some Socratic skepticism to the rise of these models. What are the hidden costs of this efficiency? First, there is the environmental impact. Running these massive data centers requires an immense amount of electricity and water for cooling. As we scale these tools, we must ask if the convenience of a summarized email is worth the carbon footprint. Second, there is the issue of privacy. When you feed your company’s private data into a public model, where does that data go? Most companies are still figuring out how to protect their intellectual property in an age where every prompt could potentially train the next version of the model.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Under the Hood for Power Users

For those who want to go beyond the chat box, this section looks at how to take fuller control of these tools. Power users are moving away from the standard web interfaces and toward API integrations and locally hosted models. Using an API allows you to build the AI directly into your existing workflows, such as your task manager or your code editor. This bypasses the need to copy and paste text back and forth. However, you must be aware of API rate limits and the cost per thousand tokens. A token is roughly three quarters of an English word, and costs can add up quickly if you are processing large volumes of data. Another major trend is the use of local LLMs. Tools like Ollama or LM Studio allow you to run models directly on your own hardware. This is a major privacy advantage because your prompts and files never leave your machine. You can find more about this in guides that focus on local implementation.
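The cost arithmetic is easy to sketch. The example below uses the three-quarters-of-a-word rule of thumb from the paragraph above to estimate tokens and cost before sending anything to an API. The price is a made-up placeholder, not any provider's real rate, and real tokenizers will give somewhat different counts.

```python
import math

# Rule of thumb from the text: one token is about 0.75 English words.
# Real tokenizers vary by model and language, so treat this as an estimate.
WORDS_PER_TOKEN = 0.75

def estimate_tokens(text):
    """Estimate the token count of `text` from its word count."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)

def estimate_cost(text, price_per_1k_tokens):
    """Estimate the cost of processing `text` at a given price per 1,000 tokens."""
    return estimate_tokens(text) / 1000 * price_per_1k_tokens

# A 750-word document and a hypothetical price of 0.01 per 1,000 tokens.
document = "word " * 750
print(estimate_tokens(document))
print(estimate_cost(document, price_per_1k_tokens=0.01))
```

Running an estimate like this over a folder of documents before a large batch job is a cheap way to avoid a surprising bill. Check your provider's current pricing page for the actual rates, which often differ for input and output tokens.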

Technical specifications you should know include:

- Context Window: This is the amount of text the model can “remember” at one time, usually measured in tokens. Current models range from 8k to over 200k tokens.

- Quantization: This is a process of shrinking a model by storing its internal numbers at lower precision, so it can run on consumer hardware without losing too much quality.

- Temperature: A setting that controls the randomness of the output. A lower temperature makes the model more predictable, while a higher temperature makes it more creative.

- Latency: The time it takes for the model to start generating a response, which is critical for real time applications.

- Inference: The actual process of the model generating an answer based on your prompt.

- Fine-tuning: Training a pre-existing model on a smaller, specific dataset to make it an expert in a particular field.
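Of the terms above, temperature is the easiest to see in numbers. The sketch below shows a common way temperature is applied: the model's raw scores for candidate words are divided by the temperature before being turned into probabilities. The three scores are invented for illustration, and actual products may combine temperature with other sampling settings.

```python
import math

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be greater than zero")
    scaled = [s / temperature for s in scores]
    # Subtract the maximum before exponentiating for numerical stability.
    highest = max(scaled)
    exps = [math.exp(s - highest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Invented scores for three candidate next words.
scores = [2.0, 1.0, 0.5]

cold = softmax_with_temperature(scores, temperature=0.2)
hot = softmax_with_temperature(scores, temperature=2.0)

print([round(p, 3) for p in cold])  # the top choice dominates
print([round(p, 3) for p in hot])   # probabilities are closer together
```

At a low temperature nearly all of the probability lands on the top-scoring word, so the output is predictable. At a high temperature the alternatives get a real chance of being picked, which reads as more creative and also more error-prone.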

The technical side of AI is moving toward smaller, more efficient models that can run on a phone or a laptop. This reduces the reliance on big tech infrastructure and gives the user more control. If you are serious about using AI, you should look into how to manage your own context windows and how to structure your data so the machine can find it easily. This might involve using a vector database or a RAG (Retrieval-Augmented Generation) system. These systems allow the AI to look up information in your own files before it generates an answer. Grounding answers in retrieved text tends to reduce hallucinations and makes the tool more reliable for professional work, although you still need to check the output. You can follow the latest research on these methods at sites like MIT Technology Review to stay ahead of the curve.
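The retrieval step can be sketched in a few lines. This is a toy stand-in under clear assumptions: real RAG systems use learned embeddings and a vector database, whereas this example compares plain word counts. The notes, the question, and the function names are all hypothetical.

```python
import math
from collections import Counter

def vectorize(text):
    """Represent text as word counts (a crude stand-in for a learned embedding)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(question, documents, top_k=1):
    """Return the `top_k` documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put the retrieved text in front of the question before it goes to a model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical notes standing in for your own files.
notes = [
    "invoices are due within 30 days of delivery",
    "the office is closed on public holidays",
    "refunds are processed within 5 business days",
]

print(build_prompt("when are invoices due", notes))
```

The model never sees the unrelated notes, only the one that best matches the question. That is the core idea: narrow the material first, then ask the model to answer from it.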

The Path Forward

Starting with AI does not require a computer science degree. It requires a shift in perspective. Stop asking what the AI can do for you and start asking how you can use it to augment what you already do. The technology is not static. It is changing every month, with new models and features being released at a dizzying pace. The core principles, however, remain the same. Be specific in your requests, verify the results, and be mindful of the data you share. The most successful users are those who remain skeptical of the hype but open to the utility. As we move into the future, the gap between those who use AI and those who do not will only grow. The best way to not feel lost is to start small. Pick one repetitive task and see if a model can help you do it better. That is the only way to turn a complex technology into a simple tool.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
