10 AI Videos Worth Watching This Month

The transition from static images to fluid video marks a shift in how we perceive digital evidence. We are moving past the era where a prompt produces a single frame. Now, the industry focuses on temporal consistency and the physics of motion. These ten clips represent more than just technical milestones. They serve as a window into a future where the barrier between a captured moment and a synthesized one disappears entirely. Many viewers still treat these videos as mere novelties. They look at the warped limbs or the shimmering backgrounds and dismiss the tech as a toy. This is a mistake. The signal in these videos is not the perfection of the image but the speed of its improvement. We are seeing the raw output of models that learn the rules of our world by watching it. This month, the most important clips are not the ones that look the best. They are the ones that prove the software understands how gravity, light, and human anatomy interact over time. This is the foundation of a new visual language.

The current state of video generation relies on diffusion models that have been expanded into the third dimension of time. Instead of just predicting where a pixel should go on a flat plane, these systems predict how that pixel should change over sixty frames. This requires a massive amount of compute and a deep understanding of continuity. When you watch a clip of a person walking, the model must remember what the person looked like three seconds ago to ensure their shirt color does not change. This is called temporal coherence. It is the hardest problem in synthetic media. Most of the videos we see today are short because maintaining this coherence over long durations is computationally expensive. The models often take shortcuts. They might blur a background or simplify a complex movement to save on processing power. However, the latest batch of releases shows a significant leap in maintaining detail across the entire duration of the clip. This suggests that the underlying architectures are becoming more efficient at handling high-dimensional data.
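
To make the idea of temporal coherence concrete, here is a minimal sketch in Python. It is not how any production model is evaluated. It assumes a clip is a list of small grayscale frames and uses a hypothetical `flicker_score` helper that averages how much each pixel changes from one frame to the next. Real motion also changes pixels, so this is only a crude proxy for unwanted flicker.

```python
from typing import List

# A grayscale frame: rows of pixel intensities in the range [0, 1].
Frame = List[List[float]]


def frame_difference(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two same-sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def flicker_score(frames: List[Frame]) -> float:
    """Average difference between consecutive frames; lower means steadier."""
    diffs = [
        frame_difference(frames[i], frames[i + 1])
        for i in range(len(frames) - 1)
    ]
    return sum(diffs) / len(diffs)


# Toy 2x2 clips (illustrative data only): one holds its values,
# the other alternates between fully dark and fully bright frames.
steady = [[[0.5, 0.5], [0.5, 0.5]] for _ in range(4)]
dark = [[0.0, 0.0], [0.0, 0.0]]
bright = [[1.0, 1.0], [1.0, 1.0]]
flickering = [dark if i % 2 == 0 else bright for i in range(4)]

print(flicker_score(steady))      # 0.0
print(flicker_score(flickering))  # 1.0
```

A shirt that changes color between frames would push a score like this up in the affected region, which is the kind of inconsistency the paragraph above describes.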

The confusion most people bring to this topic is the idea that the AI is “editing” video. It is not. It is dreaming the video into existence from a vacuum of noise. There is no source footage being manipulated. There is only a mathematical probability that a certain sequence of pixels represents a cat jumping or a car driving. This distinction matters because it changes how we think about copyright and creativity. If there is no source material, the concept of a “remix” becomes obsolete. We are dealing with a generative process that synthesizes information it has seen during training to create something entirely new. This process is becoming so fast that we are approaching real-time generation. Soon, the delay between a thought and a moving image will be measured in milliseconds. This will change how stories are told and how information is consumed across the globe.

The global implications of this technology reach far beyond Hollywood or advertising agencies. We are entering an era where the cost of creating high-quality visual propaganda is dropping to zero. In regions with low media literacy, a single convincing video can spark civil unrest or swing an election. This is not a theoretical threat. We have already seen synthetic clips used to impersonate political leaders and spread misinformation about global conflicts. The speed at which these videos can be produced means that fact-checkers are constantly playing catch-up. By the time a video is debunked, it has already been viewed millions of times. This creates a permanent state of skepticism where people stop believing even real footage. This “liar’s dividend” allows bad actors to dismiss genuine evidence of wrongdoing as just another AI fabrication. The erosion of shared reality is perhaps the most significant consequence of the progress we are seeing this month.

On the economic front, the impact is equally profound. Countries that rely on low-cost video production and animation services are facing a sudden shift in demand. If a company in New York can generate a high-quality product demo in minutes, they no longer need to outsource that work to a studio in another time zone. This could lead to a centralization of creative power in the hands of those who own the most powerful models. At the same time, it democratizes the ability to create. A filmmaker in a developing nation now has access to the same visual tools as a major studio. This could lead to a surge in diverse storytelling that was previously blocked by high entry costs. The global balance of creative influence is shifting. We are seeing a move away from physical infrastructure like soundstages and toward digital infrastructure like GPU clusters. This transition will redefine what it means to be a “creative” hub in the 21st century.

Moving Beyond the Static Frame

To understand the real-world impact, consider a day in the life of a creative director at a mid-sized agency. In the past, a client request for a new campaign meant weeks of storyboarding, casting, and location scouting. Today, the director starts their morning by typing descriptions into a generative engine. By lunch, they have ten different versions of a thirty-second spot. None of these versions required a camera or a crew. They can test these clips with focus groups immediately. If the feedback is negative, they can iterate and have new versions by the afternoon. This compressed timeline is the new reality of the industry. It allows for a level of experimentation that was previously impossible. However, it also puts immense pressure on the staff. The expectation is no longer just quality, but extreme volume and speed. The role of the human is shifting from a creator of images to a curator of possibilities. They must decide which of the thousand generated options actually fits the brand’s voice.

The consequences for the labor market are stark. Entry-level positions in the video industry, such as junior editors or motion graphics artists, are being automated first. These roles often involve the kind of repetitive tasks that AI handles best. For example, removing a background or matching the lighting between two shots can now be done in seconds. While this frees up senior creatives to focus on the big picture, it removes the “training ground” for the next generation of talent. Without these entry-level roles, it is unclear how young professionals will develop the skills needed to become directors or producers. We are seeing a hollowing out of the middle class in the creative arts. The gap between the independent creator using AI and the high-end director using a mix of tools is widening. This creates a new set of challenges for companies trying to build sustainable creative teams.

The practical stakes are visible in how companies are restructuring their budgets. Money that used to go toward travel and equipment is now being diverted into cloud compute credits and prompt engineering training. A small team can now produce work that looks like it had a million-dollar budget. This is a massive advantage for startups and independent creators. They can compete with established brands on a visual level for the first time. However, this also leads to a crowded market. When everyone can produce high-quality video, the value of the video itself decreases. The premium moves from the image to the idea. The ability to tell a compelling story becomes the only way to stand out in a sea of perfect, AI-generated content.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

- Production costs for short-form marketing content are expected to drop by over 70 percent.

- The time required for visual effects post-production is shrinking from months to days.

We must apply Socratic skepticism to this rapid advancement. What are the hidden costs of this “free” creativity? The first cost is environmental. Training and running these models requires a staggering amount of electricity and water for cooling data centers. As we generate more video, our carbon footprint grows. Is the ability to create a clip of a cat in a space suit worth the environmental toll? The second cost is the loss of the “human touch.” There is an intangible quality to a video shot on film by a human who made specific, flawed choices. AI video is often too perfect, leading to an “uncanny valley” effect that can feel soulless. If we move entirely to synthetic media, do we lose the ability to connect with each other on a visceral level? We must also ask who owns the “style” of these videos. If a model is trained on the work of thousands of uncompensated artists, is the output truly new, or is it a form of high-tech plagiarism?

Privacy is another major concern. If these models can generate a realistic video of anyone doing anything, the concept of “consent” disappears. We are already seeing the rise of deepfake pornography and non-consensual imagery. This is a systemic failure of the platforms that host this content. They are unable or unwilling to police the flood of synthetic media. We must ask if the benefits of generative video outweigh the potential for life-altering harm to individuals. Furthermore, what happens to our legal system? If video evidence can no longer be trusted, how do we prove a crime occurred? The foundations of our justice and information systems are built on the idea that seeing is believing. If we break that link, we may find ourselves in a world where truth is whatever the most powerful algorithm says it is. These are the difficult questions we must face as the technology continues to mature.

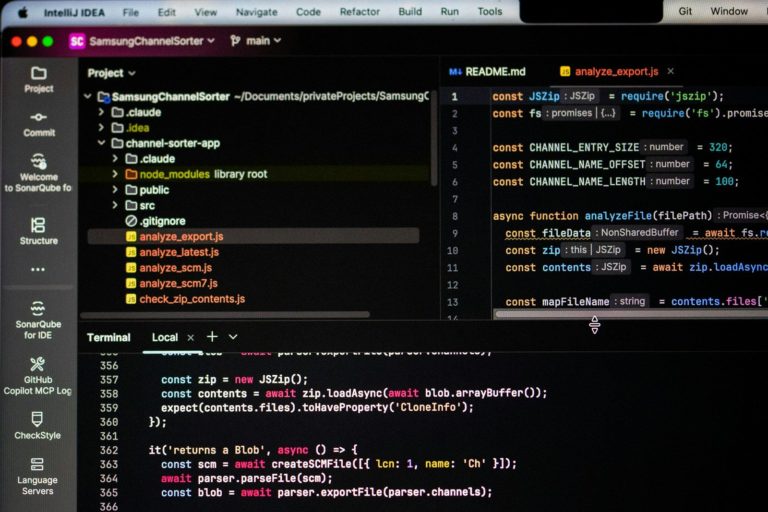

For the power users, the technical details are where the real progress is hidden. We are seeing a move toward local storage and execution of these models. While cloud-based APIs like those from OpenAI or Runway are popular, many creators are looking for ways to run these systems on their own hardware. This provides more control over the output and avoids the strict filters imposed by large corporations. However, the hardware requirements are steep. To generate high-definition video at a reasonable frame rate, you need a GPU with at least 24GB of VRAM. This limits the “local” revolution to those who can afford high-end workstations. We are also seeing the emergence of workflow integrations where AI video tools are plugged directly into software like Adobe Premiere or DaVinci Resolve. This allows for a hybrid approach where AI generates specific elements that are then refined by a human editor.
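
For readers wondering whether a given model would fit on a 24GB card, a rough back-of-the-envelope check is sketched below. The parameter counts, the bytes-per-parameter values, and the 20 percent allowance for working memory are all illustrative assumptions, not figures from any specific model. Actual usage depends heavily on resolution, clip length, and the software stack.

```python
def weights_size_gb(num_parameters: float, bytes_per_parameter: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


def fits_in_vram(
    num_parameters: float,
    bytes_per_parameter: int,
    vram_gb: float,
    overhead_fraction: float = 0.2,  # assumed allowance, not a measured value
) -> bool:
    """True if weights plus a fractional allowance for working memory fit."""
    needed = weights_size_gb(num_parameters, bytes_per_parameter) * (
        1 + overhead_fraction
    )
    return needed <= vram_gb


# Hypothetical models checked against the 24GB figure mentioned above.
print(weights_size_gb(8e9, 2))     # 16.0 GB for 8 billion 16-bit parameters
print(fits_in_vram(8e9, 2, 24))    # True
print(fits_in_vram(14e9, 2, 24))   # False: 28 GB of weights alone
print(fits_in_vram(14e9, 1, 24))   # True when stored at 8 bits per parameter
```

The last two lines show why lower-precision storage matters for local use: the same hypothetical model goes from not fitting to fitting when each parameter takes one byte instead of two.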

API limits remain a significant bottleneck for developers. Most providers charge per second of video generated, which can quickly become expensive for large-scale projects. There are also limits on the number of concurrent requests, making it difficult to build real-time applications. The next year will likely see a push for more efficient models that can run on consumer-grade hardware. We are already seeing the first steps in this direction with “distilled” versions of popular models. These smaller versions sacrifice some detail for a massive increase in speed. For the geek community, the focus is on fine-tuning. By training a small layer on top of a base model, a creator can teach the AI to recognize a specific character or art style. This level of customization is what will move AI video from a gimmick to a professional tool. It allows for the kind of consistency required for long-form storytelling.
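
The article does not name a specific fine-tuning technique. One common way to train "a small layer on top of a base model" is a low-rank adapter, and the sketch below assumes that approach. It only compares parameter counts. The layer size and rank are hypothetical numbers chosen for illustration.

```python
def full_layer_params(d_in: int, d_out: int) -> int:
    """Weights in one dense layer of the base model (bias ignored)."""
    return d_in * d_out


def low_rank_adapter_params(d_in: int, d_out: int, rank: int) -> int:
    """Weights in a low-rank adapter: two thin matrices,
    one of shape (d_in, rank) and one of shape (rank, d_out)."""
    return rank * (d_in + d_out)


# Hypothetical layer size and adapter rank, for illustration only.
d_in, d_out, rank = 4096, 4096, 16

full = full_layer_params(d_in, d_out)
adapter = low_rank_adapter_params(d_in, d_out, rank)

print(full)            # 16777216
print(adapter)         # 131072
print(adapter / full)  # 0.0078125, i.e. under 1 percent of the full layer
```

Under these assumptions the creator trains well under one percent of the weights in that layer, which is why this style of customization is feasible on far less hardware than training the base model.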

- Current API latencies for high-quality video generation range from 30 to 60 seconds per clip.

- Local storage for model weights can exceed 100GB for the most advanced open-source versions.

The bottom line is that the videos we see this month are evidence of a fundamental shift in the nature of media. We are moving away from a world of capture and toward a world of synthesis. This is not just a change in tools, but a change in how we relate to reality. The signal to follow is the integration of these tools into everyday life. When you can no longer tell if a video was shot on an iPhone or generated in a cloud, the technology has won. Meaningful progress will not be a more realistic clip of a dragon. It will be the development of tools that allow for precise, frame-by-frame control. It will be the creation of robust watermarking systems that can survive compression and editing. Most importantly, it will be the establishment of new social norms and laws that protect individuals from the misuse of this power. The videos are just the beginning of the story.
