How Copyright Fights Could Change AI Products

The End of the Free Data Era

The era of consequence-free data collection is over. For years, developers built large language models on the assumption that the open internet was a public resource. That assumption is now meeting the reality of the courtroom. High-profile lawsuits from news organizations and artists are forcing a fundamental shift in how these products are built and sold. Companies can no longer ignore the origin of their training sets, and the industry is moving toward a licensing model in which every token has a price tag. This shift will help determine which companies survive and which collapse under the weight of legal fees. It is not just a question of ethics or creators' rights; it is a matter of business sustainability. If the courts decide that training on copyrighted data is not fair use, the cost of building a competitive model will rise sharply. That would favor the tech giants that already have deep pockets and existing licensing deals, while smaller players may be priced out of the market entirely. The rapid pace of development is hitting a legal wall that will reshape the industry for years to come.

From Scraping to Licensing

At its core, the current conflict stems from how generative models learn. These systems ingest billions of words and images to identify patterns. In the early stages of development, researchers used massive datasets like Common Crawl without much concern for the individual rights attached to that data. They argued that the process was transformative, meaning it created something new and did not replace the original work. This argument is the foundation of the fair use defense in the United States. However, the scale of current AI production has changed the equation. When a model can generate a news article in the style of a specific journalist or an image that mimics a living artist, the claim of transformation becomes harder to defend. This has led to a surge in litigation from content owners who see their own work being used to train their eventual replacements.

Recent shifts show that the industry is moving away from the “ask for forgiveness” strategy. Large tech firms are now signing multimillion-dollar deals with publishers to secure high-quality, legally licensed data. This creates a two-tier system. On one side are “clean” models trained on licensed or public domain data. On the other are models built on scraped data that carry significant legal risk. The business world is starting to prefer the former. Companies do not want to integrate a tool that might be shut down by a court order or result in a massive copyright infringement bill. This has turned legal provenance into a key product feature: knowing where the data came from is now just as important as what the model can do. The trend is visible in the recent actions of companies like OpenAI and Apple, which have sought partnerships with major media conglomerates to keep their training pipelines from being interrupted by court injunctions.
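Treating provenance as a product feature implies recording, for every training record, where it came from and under what terms. The sketch below is a minimal illustration under stated assumptions: the license labels in `CLEARED_LICENSES`, the `Document` fields, and the `split_by_provenance` helper are all hypothetical, and a real pipeline would take its cleared list from the legal team rather than from a hard-coded set.

```python
from dataclasses import dataclass

# Hypothetical license labels that a legal team has cleared for training use.
CLEARED_LICENSES = {"public-domain", "cc0", "licensed-direct"}


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str   # where the text came from, e.g. a publisher feed
    license: str  # license label recorded at ingestion time


def split_by_provenance(docs):
    """Return (cleared, flagged): docs with a cleared license vs. everything else."""
    cleared, flagged = [], []
    for doc in docs:
        # Anything without an explicitly cleared label goes to manual review.
        if doc.license.lower() in CLEARED_LICENSES:
            cleared.append(doc)
        else:
            flagged.append(doc)
    return cleared, flagged


corpus = [
    Document("a1", "archive.example/books", "public-domain"),
    Document("b2", "publisher-feed", "licensed-direct"),
    Document("c3", "web-crawl", "unknown"),
]
cleared, flagged = split_by_provenance(corpus)
print([d.doc_id for d in cleared])  # ['a1', 'b2']
print([d.doc_id for d in flagged])  # ['c3']
```

The design choice worth noting is the default: a record is excluded unless its label is explicitly cleared, which mirrors the risk-averse posture the enterprise buyers above are demanding.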

A Fragmented Global Legal Map

The legal battle is not confined to one country. It is a global struggle, with different regions taking very different approaches. In the European Union, the AI Act sets new transparency standards: providers of general-purpose AI models must publish a sufficiently detailed summary of the content used to train them. This is a significant hurdle for companies that have kept their training sets secret. According to a report by Reuters, these regulations aim to balance corporate power with individual rights, but they also add a heavy layer of compliance. Japan has taken a more developer-friendly stance: Article 30-4 of its Copyright Act permits the use of works for data analysis, and the government has indicated that training on copyrighted data will often not infringe. These differences create room for regulatory arbitrage, in which companies move operations to countries with more lenient rules, potentially producing a geographic divide in AI capabilities.

The United States remains the primary battleground because most of the major AI companies are based there. The outcomes of the cases brought by The New York Times and by various authors will set the tone for the rest of the world. If US courts rule against the AI companies, it could trigger a wave of similar litigation globally. This uncertainty is a major drag on investment for some, while others see it as a chance to consolidate power. Large corporations with existing content libraries, such as film studios and stock photo agencies, suddenly hold enormous leverage. They are no longer just content creators; they are the gatekeepers of the raw materials needed for the next generation of software. This is changing the power dynamics of the entire tech industry, shifting influence away from software engineers and toward those who own the rights to human expression. That shift sits at the center of the wider debate over AI governance and ethics.

The New Cost of Doing Business

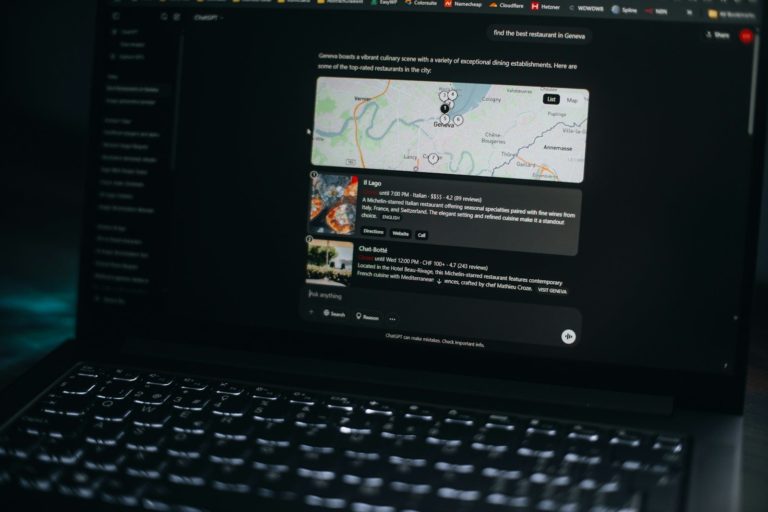

The practical impact of these legal fights is already visible in corporate boardrooms. Consider a typical day for a product manager at a mid-sized tech firm in 2026. Their task is to launch a new automated marketing tool. A few years ago, they would have simply plugged into a popular API and started shipping. Today, they must spend hours with the legal team reviewing the terms of service for that API. They need to know whether the model was trained on “safe” data and whether the provider offers indemnification, a contractual promise to cover the customer's legal costs if the customer is sued for copyright infringement. This is a massive shift in how software is sold. The focus has moved from pure performance to legal safety, and a tool that cannot vouch for its data sources is often rejected by risk-averse enterprise clients.

Imagine a graphic designer using an AI tool to create a campaign for a global brand. They generate an image, but it looks suspiciously like the work of a famous photographer. If the brand uses that image, it could face a lawsuit. To avoid this, companies are now implementing “human-in-the-loop” workflows where every AI output is checked against copyright databases. This adds a layer of friction that many did not anticipate. It slows production, which was the main selling point of AI in the first place. The business consequences of legal uncertainty are clear: higher insurance premiums, slower product cycles, and a constant fear of litigation. Companies are now forced to allocate significant portions of their budgets to legal defense and licensing fees rather than research and development.
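One way such a screening step can work is perceptual hashing, which reduces an image to a short fingerprint so that near-copies of a known work produce similar bits. The sketch below implements a basic average hash on an already-downscaled 8x8 grayscale grid (the resizing step is assumed to happen elsewhere). The `needs_review` helper, its `max_distance` threshold, and the local list of reference hashes are hypothetical stand-ins for whatever database a company actually licenses. A match only routes the image to a human reviewer; it is not a legal judgment.

```python
def average_hash(pixels):
    """Average hash of a small grayscale grid (e.g. 8x8 values in 0..255).

    Each bit is 1 if the pixel is brighter than the grid's mean, so uniform
    brightness changes leave the hash unchanged.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def needs_review(candidate_hash, reference_hashes, max_distance=5):
    """Flag an output for human review if it is close to any known work (hypothetical threshold)."""
    return any(hamming(candidate_hash, ref) <= max_distance for ref in reference_hashes)


# A known work (a simple gradient), a slightly brightened copy, and an unrelated image.
reference = [[r * 32 + c * 4 for c in range(8)] for r in range(8)]
brightened = [[min(255, p + 3) for p in row] for row in reference]
checkerboard = [[255 if (r + c) % 2 == 0 else 0 for c in range(8)] for r in range(8)]

known = [average_hash(reference)]
print(needs_review(average_hash(brightened), known))    # True
print(needs_review(average_hash(checkerboard), known))  # False
```

The brightened copy hashes identically because every pixel and the mean shift together, which is why perceptual hashes catch lightly edited copies that a byte-for-byte comparison would miss. They will not catch a new image that merely imitates a photographer's style, which is one reason human review stays in the loop.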

People often overestimate how quickly these legal issues will be resolved. They think a single court case will settle everything. In reality, this will likely be a decade-long process of appeals and legislative tweaks. At the same time, people underestimate the technical difficulty of removing copyrighted data from a model once it has been trained. You cannot simply “delete” a specific book or article from a neural network, because what the model learned from it is spread across its parameters. Techniques for “unlearning” specific data remain immature, so the only reliable way to comply with a removal order may be to retrain the model from scratch without the disputed material. This is a catastrophic risk for any business: a single legal loss could wipe out years of work and millions of dollars in investment. This reality is forcing developers to be much more selective about what they include in their training sets from the very beginning.

The High Price of Permission

What is the true cost of a “clean” model? If only the largest companies can afford to license the entire history of human thought, do we end up with a monopoly on intelligence? We must ask if the protection of individual creators will inadvertently destroy the competition that keeps the tech industry healthy. There is also the question of privacy. If companies move away from public web scraping and toward private data sets, will they start using our personal emails and private documents to train their models? The hidden cost of “legal” AI might be a further erosion of our digital privacy as companies look for every possible source of data that they can legally own. This shift could create a world where our personal information becomes the most valuable training data available.

We should also consider who actually benefits from these licensing deals. Is the money going to the individual writers and artists, or is it being swallowed up by large publishing conglomerates? If the goal of copyright is to encourage creativity, we must ask if these new deals actually achieve that. Or do they simply create a new revenue stream for corporate entities while the actual creators remain underpaid?

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Technical Workarounds and Data Gaps

For power users and developers, the shift toward licensed data is changing the technical stack. One of the most significant trends is the move toward Retrieval-Augmented Generation (RAG). Instead of trying to bake all knowledge into the model's weights during training, RAG lets a system look up information in a private, licensed database at query time. Proponents argue this sidesteps many training-related copyright questions, because the model is not absorbing the data into its weights; it reads the material only to answer a specific query, although the retrieved content itself still needs to be licensed. This makes local storage and efficient indexing more important than ever. Developers are spending more time building robust retrieval systems and less time on the training process itself. This architectural shift is a direct response to the legal pressures facing the industry.
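The retrieval half of RAG can be shown with a toy example. The sketch below assumes a small in-memory store of documents the operator holds rights to and ranks them by bag-of-words cosine similarity; production systems typically use learned embeddings and a vector index instead. `LicensedStore` and `build_prompt` are hypothetical names, and the code stops at assembling the prompt rather than calling any particular model API.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LicensedStore:
    """In-memory index over documents the operator holds rights to (hypothetical)."""

    def __init__(self, docs):
        self.docs = docs  # doc_id -> text
        self.vectors = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}

    def search(self, query, k=2):
        """Return the IDs of the k documents most similar to the query."""
        q = Counter(tokenize(query))
        ranked = sorted(self.vectors, key=lambda d: cosine(q, self.vectors[d]), reverse=True)
        return ranked[:k]


def build_prompt(store, question, k=2):
    """Quote retrieved passages with their IDs so an answer can cite its sources."""
    hits = store.search(question, k)
    context = "\n".join(f"[{doc_id}] {store.docs[doc_id]}" for doc_id in hits)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {question}"


store = LicensedStore({
    "press-001": "The central bank raised interest rates by a quarter point.",
    "press-002": "The football club signed a new striker on Friday.",
    "press-003": "Analysts expect interest rates to stay high next year.",
})
print(store.search("What happened to interest rates?"))  # ['press-003', 'press-001']
print(build_prompt(store, "What happened to interest rates?"))
```

Keeping document IDs in the prompt is a deliberate choice: it lets the system show which licensed source each answer drew on, which supports the kind of provenance tracking enterprise buyers now ask for.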

However, RAG has its own limitations. It depends on the quality of the external database and the speed of the retrieval process. API limits are also a major factor. As data providers realize the value of their content, they are tightening their APIs, limiting how many requests a developer can make and what they can do with the data once they have it. This makes it harder to build high-performance applications that require constant access to fresh information.

Developers are also looking at smaller, specialized models trained on narrow, high-quality datasets. These “small language models” are easier to audit and can carry less legal risk. They can be hosted locally, which helps with privacy and reduces reliance on expensive third-party APIs. The geek community is currently focused on how to maintain model performance while shrinking the size of the training set. This requires more sophisticated data cleaning and a better understanding of which tokens actually contribute to the model's intelligence. The technical challenge of 2026 is no longer just about scale, but about efficiency and legal compliance.
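When data providers tighten their request quotas, developers commonly respect them on the client side with a token bucket, which allows short bursts but caps the sustained request rate. The sketch below is generic and assumes a quota expressed in requests per second; the numbers are illustrative, and `TokenBucket` and `FakeClock` are hypothetical helpers, not part of any provider's SDK. Real limits and their exact semantics are set by each provider's terms.

```python
import time


class TokenBucket:
    """Client-side limiter: bursts up to `capacity`, refills at `rate` requests per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def try_acquire(self):
        """Consume one token if available; return False if the caller must wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class FakeClock:
    """Manually advanced clock so the example is deterministic."""

    def __init__(self):
        self.t = 0.0

    def __call__(self):
        return self.t


clock = FakeClock()
bucket = TokenBucket(rate=2, capacity=3, clock=clock)
print([bucket.try_acquire() for _ in range(4)])  # [True, True, True, False]
clock.t += 0.5  # half a second refills one token at 2 requests per second
print(bucket.try_acquire())  # True
```

Injecting the clock keeps the limiter testable; in production the default `time.monotonic` is used because it is not affected by changes to the system clock.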

The Compliance Mandate

The bottom line is that the relationship between AI and copyright has entered a new, more mature phase. The Wild West days of unrestricted scraping are over. Businesses must now prioritize legal compliance as much as technical performance. This will likely make AI products more expensive, but also more stable and dependable for enterprise use. The tension between innovation and ownership will continue to define the industry for the foreseeable future. Companies that can respect creator rights while still pushing the boundaries of what is possible will be the ones that lead the next decade of tech. It is no longer enough to build a powerful tool; you must also prove that you have the right to build it. The future of AI is written not just in code, but in the contracts that govern the data behind it.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
