The Next Chatbot Battle: Search, Memory, Voice or Agents?

The era of the blue link is fading. Tech giants are now fighting for the very moment a user asks a question. This is not just a minor update to how we find information. It is a fundamental shift in the power dynamic between those who create content and those who aggregate it. For decades, the deal was simple. You provide the data, and the search engine provides the traffic. That contract is being rewritten in real time as chatbots move from being simple toys to comprehensive agents. We are seeing the rise of answer engines that do not want you to click away. They want to keep you within their own walls. This shift puts enormous pressure on the traditional web. **Visibility no longer guarantees a visit.** A brand might appear in an AI summary, but if the user gets what they need without leaving the chat, the creator gets nothing. This competition spans voice interfaces, persistent memory, and autonomous agents. The winner will not necessarily be the smartest model. It will be the one that fits most seamlessly into the daily flow of human life.

Traditional search engines work like a massive library index: they point you to a shelf. Modern AI interfaces work like a research assistant who reads the books for you and hands you a summary. This distinction is critical for understanding the current tech shift. An answer engine uses large language models to synthesize information from across the web into a single response. This process typically relies on a technique called retrieval-augmented generation (RAG): the system first fetches relevant, current documents and then passes them to the model as context for its answer. This reduces, but does not eliminate, the chance of the model making things up, while keeping the experience conversational. However, this method changes how we perceive accuracy. When a search engine gives you ten links, you can check the sources yourself. When an AI gives you one answer, you are largely forced to trust its judgment.

This is not just about search. It is about discovery. New patterns are emerging where users do not type keywords. They speak to their devices or let their agents monitor their emails to anticipate needs. These systems are becoming more proactive: they do not wait for a query, but offer suggestions based on context. This transition from reactive search to proactive assistance is the core of the current battle. Companies are racing to build ecosystems where your data stays in one place. If your chatbot remembers your last vacation, it can plan your next one better than a generic search engine ever could. This persistent memory is the new moat in the tech industry.
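To make the retrieval step concrete, here is a minimal Python sketch of retrieval-augmented generation under simplifying assumptions. The three-page corpus and its URLs are hypothetical, and word-count vectors compared with cosine similarity stand in for the learned embeddings a production system would use. The sketch stops at building the augmented prompt; the actual call to a language model is left out.

```python
import math
import re
from collections import Counter

# Hypothetical mini-corpus standing in for pages fetched from the web.
DOCUMENTS = {
    "recipes.example/pancakes": "Whisk flour, milk and eggs, then fry the batter in butter.",
    "weather.example/today": "Light rain is expected this afternoon with a high of 14 degrees.",
    "travel.example/lisbon": "Lisbon trams are the easiest way to reach the old town.",
}


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words vector; an assumption in place of real embeddings."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return the k (url, text) pairs most similar to the query."""
    q = tokenize(query)
    ranked = sorted(DOCUMENTS.items(), key=lambda item: cosine(q, tokenize(item[1])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, k: int = 2) -> str:
    """Augment the user's question with numbered sources before generation."""
    sources = retrieve(query, k)
    context = "\n".join(f"[{i}] {url}: {text}" for i, (url, text) in enumerate(sources, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("Will it rain this afternoon?", k=1))
```

With `k=1`, the question about rain selects only the weather page, because it is the only document that shares words with the query. Numbering the sources inside the prompt is what allows a model to cite them, which partly restores the ability to check sources that a page of links used to provide.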

The Shift From Links to Direct Answers

The move toward closed AI ecosystems has profound effects on the global economy. Small publishers and independent creators are the first to feel the squeeze. When an AI overview provides a full recipe or a technical fix, the original website loses the ad revenue that keeps it alive. This is not a local problem. It affects every corner of the internet where information is exchanged. Many governments are now scrambling to update copyright laws to account for this. They are asking whether training a model on public data is fair use if that model then competes with the source. There is also a growing divide between those who can afford premium, private AI and those who rely on ad-supported, data-hungry free versions. This creates a new kind of digital inequality. In regions where mobile devices are the primary way to access the internet, voice interfaces are becoming the dominant mode of interaction. This bypasses the traditional web entirely. If a user in a developing market asks their phone for medical advice and gets a direct answer, they may never see the website that provided the raw data. This shifts the value from the content creator to the interface provider. Large corporations are also rethinking their internal data strategies. They want the benefits of AI without giving their proprietary secrets to a third party. This has led to a surge in demand for local models that run on private servers. The global tech map is being redrawn around who controls the data and who controls the gateway to that data.

How Answer Engines Process Your World

Imagine a typical morning in the year . You do not check a dozen apps to start your day. Instead, you speak to a device on your nightstand. It has already scanned your calendar, your emails, and the local weather. It tells you that your first meeting was moved back thirty minutes, so you have time for a longer walk. It also mentions that a product you were looking at is now on sale at a nearby store. This is the promise of the agentic web. It is a world where the interface disappears. You are no longer navigating a series of menus or scrolling through pages of search results. You are having a continuous conversation with a system that knows your preferences. In this scenario, the concept of visibility changes. For a local coffee shop, being the top result on a map is less important than being the one the AI agent recommends based on the user’s specific taste in beans. This creates a high-stakes environment for businesses. They must optimize for AI discovery rather than traditional SEO. The difference between visibility and traffic becomes stark. A brand might be mentioned by an AI agent a thousand times a day, but if the agent handles the transaction directly, the brand never sees a single visitor on its website. This is already happening in the travel and hospitality sectors, where AI agents can book flights, reserve tables, and organize itineraries without the user ever seeing a booking site.

The day in the life of a modern consumer is becoming more efficient but also more insulated. We are guided by algorithms that prioritize convenience over exploration. This raises questions about how we discover new things that fall outside our established patterns. If the AI only shows us what it thinks we want, we might lose the serendipity of the open web. Consider a researcher looking for a specific data point. In the old world, they might find a paper that leads to another paper and eventually to a new theory. In the AI world, they get the data point and stop. This efficiency is a double-edged sword. It saves time, but it may also narrow our perspective. For companies, the challenge is to stay relevant in a world where they are no longer the destination. They must become the data that the AI depends on. This means focusing on high-quality, original content that cannot be easily replicated by a machine. The difference between visibility and traffic is now a matter of survival for many digital businesses. If you are visible in the AI summary but no one clicks your link, your business model must change. This is the new reality of the internet. It is a place where the answer is the product and the source is just a footnote.

The Economic Ripple of the New Web

We must ask what we are giving up in exchange for this convenience. Is the loss of direct traffic to creators a price worth paying for faster answers? If the primary sources of information disappear because they are no longer profitable, what will the AI models train on in the future? We are potentially facing a feedback loop where AI models are trained on AI-generated content, leading to a decline in overall quality. There is also the question of privacy. For an agent to be truly useful, it needs deep access to our personal lives. It needs to know our schedules, our relationships, and our preferences. Who owns this memory? If you switch from one provider to another, can you take your digital history with you? The current lack of interoperability suggests that tech giants are building new walled gardens. There is also the physical cost. Running massive language models for every simple search query requires an enormous amount of energy and water for cooling data centers. Is the environmental impact of a conversational search justified when a simple list of links would suffice? We must also consider the bias inherent in a single answer. When a search engine gives us a variety of perspectives, we can weigh them. When an AI provides a definitive summary, it hides the nuance and the conflict. Are we ready to outsource our critical thinking to a black box? These are not just technical challenges. They are fundamental questions about how we want our society to function in an automated age.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Living with a Digital Shadow

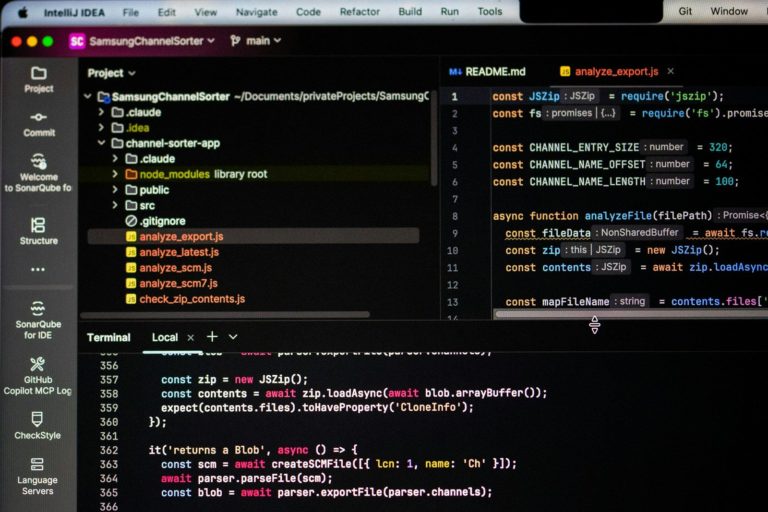

For the power user, the battle is about more than just the chat window. It is about the plumbing. Workflow integration is the next frontier. We are moving away from copy-and-paste toward deep API connections. A modern assistant needs to hook into tools like Slack, GitHub, and Notion to be truly effective. However, these integrations are often constrained by strict API rate limits and by the context window, the maximum number of tokens a model can consider at once. Managing the context window is a constant struggle for developers. If a model forgets the start of a conversation, its usefulness as an agent collapses. This is why local storage and vector databases are becoming so important. By storing embeddings (numerical representations of text) locally, an agent can quickly retrieve relevant information without sending everything to the cloud. This also addresses some privacy concerns. We are seeing a rise in small language models that can run on a high-end laptop or even a phone. These models might not be as capable as the giants, but their low latency makes them better suited to real-time voice interaction. Latency is the silent killer of AI adoption. If a voice assistant takes three seconds to respond, the illusion of a natural conversation is broken. Developers are also grappling with the challenge of tool use. Teaching a model not just to talk but to execute code or move files requires a high degree of reliability. One wrong command could delete a database or send a private email to the wrong person.
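Why would a model "forget the start of a conversation"? A common cause is that the application trims the history to fit the context window. The Python sketch below shows one simple policy, assuming a budget measured in tokens: always keep the system instructions, then keep the most recent turns that fit and drop the oldest. The `Message` class, the `fit_to_window` function and the word-count token estimate are illustrative assumptions, not any provider's API; a real system would count tokens with the model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str  # "system", "user" or "assistant"
    text: str


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def fit_to_window(history: list[Message], budget: int) -> list[Message]:
    """Keep system messages, then as many of the most recent turns as fit in `budget`."""
    system = [m for m in history if m.role == "system"]
    turns = [m for m in history if m.role != "system"]
    used = sum(estimate_tokens(m.text) for m in system)
    kept: list[Message] = []
    # Walk backwards so the newest turns survive and the oldest are dropped first.
    for msg in reversed(turns):
        cost = estimate_tokens(msg.text)
        if used + cost > budget:
            break  # stop at the first turn that does not fit, keeping the kept turns contiguous
        kept.append(msg)
        used += cost
    return system + list(reversed(kept))


if __name__ == "__main__":
    history = [
        Message("system", "You are a scheduling assistant."),
        Message("user", "Book a table for two on Friday."),
        Message("assistant", "Done, 7pm at the usual place."),
        Message("user", "Move it to Saturday."),
    ]
    print([m.role for m in fit_to_window(history, budget=16)])  # ['system', 'assistant', 'user']
```

In this example the original request ("Book a table for two on Friday.") is the turn that gets dropped, so the agent no longer knows exactly which booking "it" refers to. That lost fact is the kind of information a local vector store could retrieve and re-insert into the prompt.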

Under the Hood of Agentic Workflows

The focus is shifting from raw parameter count to the precision of these actions. We are also seeing a move toward hybrid systems, which use a large model for complex reasoning and a smaller, faster model for simple tasks. This helps manage the high cost of compute while keeping the user experience responsive. Developers are looking for ways to reduce the overhead of these calls, and prompt caching is one of them. It lets the system reuse the already-processed start of a prompt, such as standing instructions and earlier turns of the conversation, instead of reprocessing the entire history on every call. This matters most for long-running agents that might interact with a user over several days. Another key area of focus is the reliability of the output. For an agent to be useful in a professional setting, it cannot afford to hallucinate. It must be able to verify its own work. This is leading to the development of self-correcting models that check their answers against a set of known facts before presenting them to the user. The integration of these systems into existing enterprise software is the final hurdle. If an AI can accurately update a CRM or manage a project board, it becomes an indispensable part of the team. This is the level of integration that power users are demanding. They do not want another chat window. They want a tool that lives where they work and understands the specific context of their industry.
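A hybrid system needs a router that decides which model handles each request. The Python sketch below uses a deliberately crude rule, assuming that long prompts, or prompts containing words like "plan" or "compare", need the large model. The two backend functions are hypothetical placeholders rather than real model calls, and the keyword list and word limit are illustrative assumptions; production routers more often rely on a trained classifier or on the small model's own confidence.

```python
from typing import Callable


# Hypothetical backends; in practice these would be API calls or local inference.
def small_model(prompt: str) -> str:
    return f"[small] {prompt[:40]}"


def large_model(prompt: str) -> str:
    return f"[large] {prompt[:40]}"


# Illustrative cues that a request needs multi-step reasoning (an assumption).
COMPLEX_CUES = ("plan", "compare", "analyze", "why", "step by step")


def route(prompt: str, max_simple_words: int = 20) -> Callable[[str], str]:
    """Send short, simple requests to the cheap model and everything else to the large one."""
    text = prompt.lower()
    if len(text.split()) > max_simple_words or any(cue in text for cue in COMPLEX_CUES):
        return large_model
    return small_model


def answer(prompt: str) -> str:
    """Pick a backend for the prompt, then call it."""
    return route(prompt)(prompt)


if __name__ == "__main__":
    print(answer("What time is it in Tokyo?"))
    print(answer("Plan a three-day trip to Lisbon"))
```

Here the time question goes to the small model, while the trip-planning request is escalated to the large one. The rule is biased toward escalation: any sign of complexity sends the request to the large model, trading some extra compute for fewer weak answers on hard tasks.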

What Progress Actually Looks Like

The next year will determine if chatbots become true partners or remain sophisticated search boxes. Meaningful progress will not be measured by higher benchmark scores. It will be measured by how well these systems handle complex, multi-step tasks without human intervention. We should look for improvements in cross-platform memory and the ability of agents to work together. The noise of new model releases often obscures the signal of actual utility. The real winners will be the ones who solve the friction of the user interface. Whether through voice, wearable tech, or seamless browser integration, *the goal is to make the technology disappear.* As the line between search and action blurs, the way we interact with the digital world will never be the same.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
