Open vs Closed AI: What Ordinary Users Need to Know

The Great Wall of Intelligence

The artificial intelligence industry is currently splitting into two distinct camps. On one side, companies like OpenAI and Google build massive, proprietary systems that live behind a digital wall. You access these tools through a website or an app, but you never see how they work. On the other side, a growing community of developers and companies like Meta and Mistral are releasing their models for anyone to download. This divide is not just a technical debate. It is a fundamental struggle over who controls the future of human knowledge and how much you have to pay to access it. For the average person, the choice between open and closed systems determines your privacy, your costs, and your creative freedom. If you use a closed model, you are a tenant. If you use an open model, you are an owner. Each path has trade-offs that most people ignore until something goes wrong with their data or their subscription.

The Truth Behind the Open Label

Marketing teams love to use the word open because it implies transparency and community. However, in the world of AI, the term is often used loosely. True open source software allows anyone to see the code, modify it, and share it. In AI, this would mean having access to the training data, the training code, and the final model weights. Very few major models actually meet this high bar. Most of what the public calls open AI is actually open weights. This means the company gives you the final brain of the model, but they do not tell you exactly how they built it or what specific books and websites they used to train it. It is like a bakery giving you a finished cake and the temperature of the oven, but refusing to share the exact brand of flour or the source of the eggs.

Closed AI is much simpler to define. It is a product. When you use GPT-4 or Claude 3, you are interacting with a service. You cannot download the model to your laptop. You cannot see the internal filters that prevent it from answering certain questions. You have no way of knowing if the company changed the model overnight to make it faster but less intelligent. This lack of transparency is the price of convenience. Companies argue that keeping models closed prevents bad actors from using the tech for harm. Critics argue it is simply a way to protect a monopoly. Understanding this distinction is vital because it changes how you should trust the output of the machine.
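The three labels used in this section can be written down as a simple decision rule. The sketch below is only an illustration of the definitions above: the class and function names are made up for this example, and it ignores licensing terms, which also matter in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRelease:
    """What a company actually publishes (hypothetical structure for illustration)."""
    weights_available: bool
    training_code_available: bool
    training_data_available: bool


def openness_label(release: ModelRelease) -> str:
    """Apply the article's definitions: closed, open weights, or open source."""
    if not release.weights_available:
        # Nothing to download: the model is only reachable as a service.
        return "closed"
    if release.training_code_available and release.training_data_available:
        # Weights plus the full recipe: the high bar described above.
        return "open source"
    # The finished cake without the full recipe.
    return "open weights"


print(openness_label(ModelRelease(True, False, False)))
print(openness_label(ModelRelease(False, False, False)))
```

A release with downloadable weights but no training data lands in the "open weights" bucket, which is where most models marketed as open currently sit.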

Sovereignty in the Age of Silicon

The global impact of this divide is massive. For countries outside the United States, relying on closed AI models means sending sensitive national data to servers in California or Virginia. This creates a deep dependency on a few American corporations. Open weights models allow a government in Europe or a startup in India to run the AI on their own local hardware. This provides sovereignty that closed systems can never offer. It allows for the creation of models that understand local languages and cultural nuances that a Silicon Valley giant might ignore. When a model is open, a developer in a small village has the same starting point as a researcher at a multibillion-dollar firm. This levels the playing field in a way that few technologies ever have.

Enterprises also face a tough choice. A bank cannot risk sending private customer financial records to a third-party cloud. For them, an open model that runs inside their own secure data center is the only viable option. Meanwhile, a small marketing agency might prefer the polished, high performance of a closed model because they do not have the staff to manage their own servers. The global economy is currently sorting itself into these two buckets: those who prioritize control and those who prioritize speed. Over time, the gap between these two groups will only grow. The winners will be those who recognize that AI is not a one-size-fits-all utility but a strategic asset that requires a specific type of ownership.

Privacy in the Local Sandbox

To understand the practical stakes, consider a day in the life of a medical researcher named Elena. She is working on a new study involving patient records. If she uses a popular closed AI tool, she has to strip all identifying information from her notes before she can ask the AI to summarize them. Even then, she is never quite sure if her data is being used to train the next version of the model. She is constantly worried about a data breach at the AI company. This friction slows her down and limits what she can achieve. The convenience of the cloud comes with a persistent undercurrent of anxiety.

Now, imagine Elena switches to an open weights model running on a powerful workstation in her office. She can feed the AI every single detail of her research without any fear. The data never leaves the room. She can fine-tune the model to understand specific medical terminology that the general cloud models often get wrong. She has total control over the version of the AI she is using. If a software update makes the model worse at medical analysis, she simply stays on the older version. This is the power of local AI. It turns the tool into a private assistant that works for her and only her. While the setup was harder, the long-term utility is much higher because she is not limited by corporate safety filters or privacy policies.


Ordinary users often overestimate how hard it is to run these models. They think you need a room full of servers. In reality, many open models now run on modern laptops. Conversely, people underestimate how much control they lose with closed systems. They assume the service will always be there and will always be cheap. History shows that once a company has you locked into their ecosystem, prices go up and features can disappear. By choosing an open path, you are protecting yourself against future corporate decisions that might not align with your interests. You are choosing a tool that stays in your digital toolbox forever.

The Uncomfortable Questions of Control

We must ask difficult questions about the hidden costs of these systems. If a model is closed, who is auditing it for bias? We are forced to trust the marketing materials of the company. If the AI refuses to answer a question about a political event, is that for safety or for corporate image protection? The lack of transparency makes it impossible to know. On the other hand, open models present their own risks. If anyone can download a powerful AI, what stops them from using it to create disinformation or malware? The open community argues that the best defense is more open models, but this is a theory that has not yet been fully tested in a crisis.

There is also the question of energy and hardware. Running your own AI is not free. It consumes significant electricity and requires expensive graphics cards. Are we trading corporate dependence for hardware dependence? Furthermore, the datasets used for these models are often scraped from the internet without the consent of the original creators. While closed companies hide their data sources, open weights companies are often just as vague. We must ask if any AI can truly be called open if the foundation it was built on is a secret. We are currently building the infrastructure of the future on a very shaky ethical foundation. Going forward, the pressure for real transparency will only increase.

Under the Hood for the Technical Elite

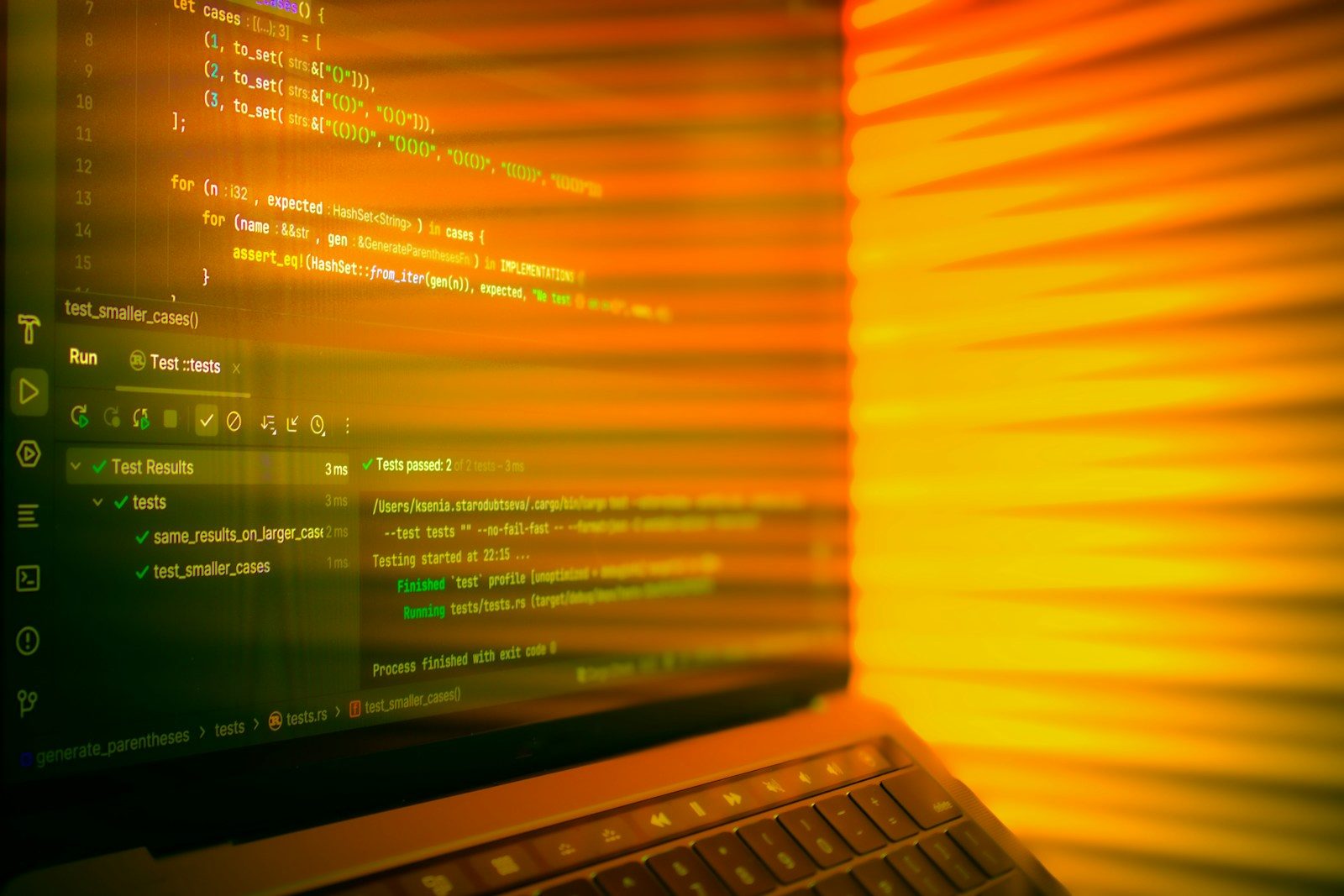

For those who want to move beyond the chat interface, the technical differences are stark. Closed AI providers offer APIs that charge you per token (a unit of text roughly the size of a word fragment) or per image. These costs can spiral quickly as you scale a project. You are also at the mercy of their rate limits. If their servers are busy, your application slows down. You have no control over the latency or the uptime. You are essentially building your business on rented land. If the provider decides to ban your use case, your entire project could vanish in an afternoon. This is a significant risk for developers who want to build long-term value.
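To see how usage-based pricing scales, here is a rough sketch comparing a metered API bill with a one-time hardware purchase. The price of 10 dollars per million tokens and the 2,000 dollar hardware budget are invented numbers for illustration, not quotes from any provider, and the function names are hypothetical. The estimate also leaves out electricity and maintenance.

```python
def api_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Cost in dollars of sending `tokens` through a metered API."""
    return tokens / 1_000_000 * price_per_million_tokens


def breakeven_tokens(hardware_cost: float, price_per_million_tokens: float) -> float:
    """Number of tokens at which the API bill equals a one-time hardware cost."""
    return hardware_cost / price_per_million_tokens * 1_000_000


PRICE = 10.0       # assumed: dollars per million tokens (illustrative only)
HARDWARE = 2000.0  # assumed: one-time cost of a local machine (illustrative only)

for monthly_tokens in (1_000_000, 10_000_000, 100_000_000):
    print(f"{monthly_tokens:>11,} tokens/month -> ${api_cost(monthly_tokens, PRICE):,.2f}")

print(f"Break-even at {breakeven_tokens(HARDWARE, PRICE):,.0f} tokens")
```

Under these assumed numbers, a hobby project costs a few dollars a month, while a busy application reaches the price of the hardware after 200 million tokens. The real crossover point depends entirely on actual prices and usage.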

Open models offer a different workflow. You can use techniques like *quantization* to shrink a massive model so it fits on cheaper hardware. Stored at 4 bits per weight instead of 16, a 70 billion parameter model shrinks from roughly 140 GB to about 35 GB, which brings it within reach of one or two high-end consumer GPUs, depending on how much memory they have. You can also use local storage for your model weights, ensuring that your application works even without an internet connection. There are no API limits and no per-token costs after you buy the hardware. Integration is also more flexible. You can modify the internal layers of the model to better suit your specific task. This level of customization is impossible with a closed API. While the initial engineering hurdle is higher, the freedom to innovate without permission is a massive advantage for power users.
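The effect of quantization on memory can be estimated with simple arithmetic: multiply the number of parameters by the bits used per weight, then divide by eight to get bytes. The sketch below covers the weights only. It ignores the extra memory needed for the conversation context and intermediate calculations, so real requirements are somewhat higher. The function name is made up for this example.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_70B = 70e9  # the 70 billion parameter model discussed above

# 16-bit is a common unquantized format; lower bit widths are quantized.
for bits in (16, 8, 4, 2):
    print(f"{bits:>2}-bit: {weight_memory_gb(PARAMS_70B, bits):6.1f} GB")
```

By this estimate, the weights take about 140 GB at 16 bits and about 35 GB at 4 bits. Whether that fits on a single card depends on the card's memory and on how aggressively the model is quantized, and lower bit widths generally cost some answer quality.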

Choosing Your Path Forward

The decision between open and closed AI depends on your specific needs. If you want the most powerful, polished experience and do not care about privacy or long-term costs, closed models like GPT-4 are the clear choice. They are the Ferraris of the AI world. They are fast, sleek, and maintained by someone else. However, if you value privacy, want to avoid recurring fees, or need to build a system that you truly own, open weights models are the way to go. They require more effort to set up, but they offer a level of security and flexibility that no subscription service can match. Current industry trends suggest that the future will be a hybrid of both. Use the closed models for quick tasks and the open models for your most important, private work. In this new era, the most important skill is knowing which tool to use for which job.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
