From Expert Systems to ChatGPT: The Fast Road to 2026

The trajectory of artificial intelligence is often viewed as a sudden explosion, but the path to 2026 was paved decades ago. We are currently moving away from the era of static software and into a period where probability dictates our digital interactions. This shift represents a fundamental change in how computers process human intent. Early systems relied on human experts to hard-code every possible rule, a process that was both slow and fragile. Today, we use large language models that learn patterns from vast datasets, allowing for a level of flexibility that was previously impossible. This transition is not just about smarter chatbots. It is about a complete overhaul of the global productivity stack. As we look toward the next two years, the focus is shifting from simple text generation to complex **agentic workflows**. These systems will not just answer questions but will perform multi-step tasks across different platforms. The winners in this space are not necessarily those with the best math, but those with the best distribution and user trust. Understanding this evolution is essential for anyone trying to predict the next wave of technical disruption.

The Long Arc of Machine Logic

To understand where we are going, we must look at the transition from expert systems to neural networks. In the 1980s, AI meant “Expert Systems.” These were massive databases of “if-then” statements. If a patient has a fever and a cough, then check for a specific infection. While logical, these systems could not handle nuance or data that fell outside their pre-defined rules. They were brittle. If the world changed, the code had to be rewritten by hand. This led to a period of stagnation where the technology could not live up to its own hype. The logic of that era still influences how we think about computer reliability today, even as we move into more fluid models.
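The brittleness described above is easy to see in a minimal sketch of a rule-based system. The rules and the `diagnose` helper below are hypothetical, written only for illustration and not taken from any real expert system. The point is that the program can only respond to situations someone has already written a rule for.

```python
# Hypothetical rules for illustration only; not taken from any real system.
# Each rule pairs a set of required conditions with an action.
RULES = [
    ({"fever", "cough"}, "check for a respiratory infection"),
    ({"fever", "rash"}, "check for a viral illness"),
]

def diagnose(symptoms):
    """Return the action of the first rule whose conditions are all present."""
    observed = set(symptoms)
    for conditions, action in RULES:
        if conditions <= observed:  # every condition in the rule was observed
            return action
    return None  # no rule matched: the system has nothing to say

print(diagnose(["fever", "cough"]))
print(diagnose(["fatigue"]))
```

Any symptom pattern outside the hand-written list falls through to `None`, and the only remedy is to add another rule by hand.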

The modern era is defined by the transformer architecture, a concept introduced in a 2017 research paper. This changed the goal from teaching a computer rules to teaching a computer to predict the next part of a sequence. Instead of being told what a chair is, the model looks at millions of images and descriptions of chairs until it understands the statistical essence of a chair. This is the core of ChatGPT and its rivals. These models do not “know” facts in the way humans do. They calculate the most likely next word based on the context of the previous words. This distinction is vital. It explains why a model can write a beautiful poem but fail at a simple math problem. One is a pattern of language, while the other requires the rigid logic that we actually stripped away to make these models work. The current era is a marriage of massive compute power and massive data, creating a tool that feels human but operates on pure math.
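The idea of "calculating the most likely next word" can be shown with a deliberately tiny stand-in. The sketch below counts which word follows which in a short made-up corpus and then picks the most frequent follower. This is a simple bigram counter, not a transformer: real models use learned neural networks over far longer contexts, and the function names and corpus here are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))
print(predict_next(model, "dog"))
```

The model has no notion of what a cat is. It only knows that "cat" followed "the" more often than anything else in the text it saw, which is the same reason a much larger model can produce fluent language without holding facts the way a person does.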

The Infrastructure of Global Dominance

The global impact of this technology is tied directly to distribution. A superior model developed in a vacuum has little value compared to a slightly worse model integrated into a billion office suites. This is why the partnership between Microsoft and OpenAI changed the industry so quickly. By placing AI tools directly into the software that the world already uses, they bypassed the need for users to learn new habits. This distribution advantage creates a feedback loop. More users provide more data, which leads to better refinement and more product familiarity. By the middle of , the shift toward integrated AI will be nearly universal across all major software platforms.

This dominance has significant implications for global labor markets. We are seeing a shift where the “middle-management” of digital tasks is being automated. In countries that rely heavily on outsourced technical support or basic coding, the pressure to move up the value chain is intense. But this is not a one-sided story of job loss. It is also about the democratization of high-level skills. A person with no formal training in Python can now generate functional scripts to analyze local business data. A comprehensive artificial intelligence analysis shows that this levels the playing field for small enterprises in developing economies that previously could not afford a dedicated data science team. The geopolitical stakes are also rising as nations compete for the hardware needed to run these models. According to Stanford HAI, the control of high-end chips has become as important as the control of energy resources. This competition will define the economic boundaries of the next decade.

Living with the New Intelligence

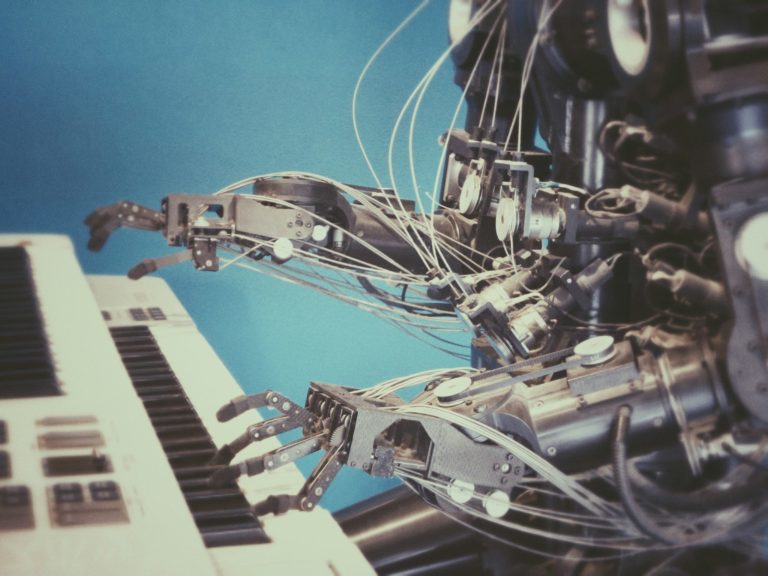

Consider a day in the life of a project coordinator in 2026. Her morning does not start with checking a hundred separate emails. Instead, an AI agent has already summarized the overnight communications from three different time zones. It has flagged a shipping delay in Singapore and drafted three potential solutions based on previous contract terms. She does not spend her time typing. Instead, she spends her time reviewing and approving the choices made by the system. This is the shift from being a creator to being an editor. The turning point for this was the realization that AI should not be a destination website but a background service. It is now woven into the fabric of daily work without requiring a specific login or a separate tab.

In the creative industries, the impact is even more visible. A marketing team can now produce a high-quality video campaign in hours rather than weeks. They use a model to generate the script, another to create the voiceover, and a third to animate the visuals. The cost of failure has dropped to nearly zero, allowing for constant experimentation. But this creates a new problem: a glut of content. When everyone can produce “perfect” material, the value of that material drops. The real-world impact is a shift toward authenticity and human-verified information. Research from Nature suggests that people are beginning to crave the imperfections that signal a human was involved. This desire for the “human touch” will likely become a premium market segment as synthetic content becomes the default.

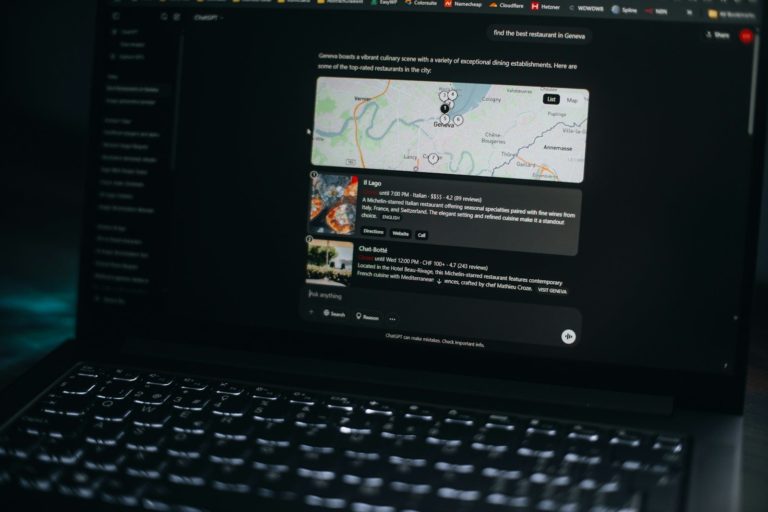

There is a common confusion that these models are “thinking” or “reasoning.” In reality, they are performing high-speed statistical pattern matching and synthesis. When a user asks a model to plan a travel itinerary, the model is not looking at a map. It is reproducing patterns of how travel itineraries are usually structured. This distinction matters when things go wrong. If the model suggests a flight that does not exist, it is not lying. It is simply providing a statistically likely but factually incorrect string of characters. This divergence between public perception and reality is where most corporate risks live. Companies that trust these systems to handle legal or medical data without human oversight are finding that the “hallucination” problem is not a bug that can be easily fixed. It is a fundamental part of how the technology works.

BotNews.today uses AI tools to research, write, edit, and translate content. Our team reviews and supervises the process to keep the information useful, clear, and reliable.

Hard Questions for a Synthetic Future

As we integrate these systems deeper into our lives, we must ask: what are the hidden costs of this convenience? Every query sent to a large model requires a significant amount of electricity and water for cooling data centers. If a simple search query now consumes ten times the energy it did five years ago, is the marginal improvement in the answer worth the environmental toll? We must also consider the privacy of the data used for training. Most of the models we use today were built by scraping the open internet without the explicit consent of the creators. Does the public good of a powerful AI outweigh the individual rights of the artists and writers whose work made it possible?

Another difficult question involves the “black box” nature of neural networks. If an AI makes a decision to deny a loan or a medical treatment, and the developers themselves cannot explain exactly why the model reached that conclusion, can we ever truly call the system fair? We are trading transparency for performance. Is this a trade we are willing to make in our legal and judicial systems? We also have to look at the centralization of power. If only a handful of companies can afford the billions of dollars required to train these models, what happens to the concept of a free and open internet? We may be moving toward a future where “truth” is whatever the most powerful model says it is. These are not technical problems to be solved with more code. They are philosophical and societal challenges that require human intervention. As noted by the MIT Technology Review, the policy decisions we make now will determine the power balance of the next fifty years.

Under the Hood of the Modern Stack

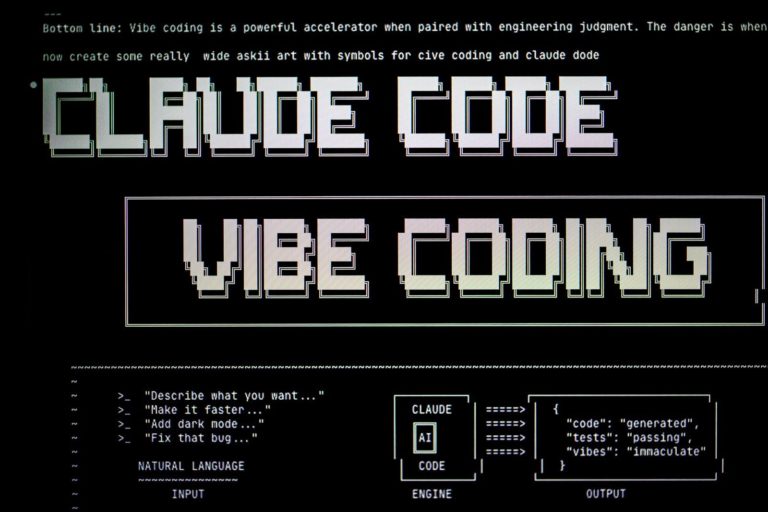

For the power user, the focus has moved beyond the chat interface and into the territory of local execution and API orchestration. While the cloud-based models offer the most raw power, the rise of local storage and execution is the real story for 2026. Tools like Ollama and llama.cpp allow users to run smaller, highly capable models on their own hardware. This keeps private data on the user's own machine and removes the latency of a round-trip to a server. Enthusiasts are currently obsessed with **quantization**, which is the process of shrinking a model by storing its weights at lower numerical precision so that it fits on a standard consumer GPU without losing too much accuracy.
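To make quantization concrete, here is a minimal sketch of one common idea: symmetric linear quantization, where floating-point weights are mapped to small signed integers plus a single scale factor. The weight values are invented, and the `quantize` and `dequantize` helpers are hypothetical. Tools such as llama.cpp use more elaborate block-wise schemes, so treat this as an illustration of the principle rather than what any specific tool does.

```python
def quantize(weights, bits=8):
    """Symmetric linear quantization: floats become signed integers plus one scale."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0  # avoid dividing by zero
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

# Invented example weights, purely for illustration.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
q, scale = quantize(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(max_error)
```

Each weight now needs one byte instead of the four used by a 32-bit float, and the rounding error per weight is at most half of `scale`. That small loss of precision is the "intelligence" being traded away for a model that fits in consumer memory.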

Workflow integration is now handled through sophisticated RAG (Retrieval-Augmented Generation) pipelines. Instead of sending all your data to the model, you store your documents in a vector database. When you ask a question, the system finds the relevant snippets of your data and feeds only those to the model as context. This bypasses the strict context window limits that still plague many systems. API limits remain a bottleneck for high-volume applications, leading many developers to implement “model routing.” This is a strategy where a cheap, fast model handles easy queries, and only the difficult questions are sent to the expensive, high-end models. This approach reduces costs and manages latency more effectively than relying on a single provider. We are also seeing a move toward “small language models” that are trained on specific, high-quality datasets rather than the entire internet. These models often outperform their larger cousins on specialized tasks like coding or legal analysis while requiring a fraction of the compute power. The ability to swap these models in and out of a workflow is becoming a standard requirement for modern software architecture.
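The retrieval step and the routing idea described above can both be sketched in a few lines. This is a toy under stated assumptions: the "embedding" is a plain word-count vector rather than a learned embedding model, the document list stands in for a vector database, the routing rule is a hypothetical word-count heuristic, and the names `small-model` and `large-model` are placeholders rather than real products.

```python
import math
from collections import Counter

def embed(text):
    """Toy 'embedding': a bag-of-words count vector (real pipelines use a learned model)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, documents, k=1):
    """Feed only the retrieved snippets to the model as context."""
    context = "\n".join(retrieve(question, documents, k))
    return f"Context:\n{context}\n\nQuestion: {question}"

def route(question, max_easy_words=12):
    """Hypothetical routing heuristic: short questions go to the cheap model."""
    return "small-model" if len(question.split()) <= max_easy_words else "large-model"

# Invented documents, purely for illustration.
documents = [
    "shipping delay in singapore was caused by port congestion",
    "the office party is on friday",
]
question = "what caused the shipping delay"
print(build_prompt(question, documents))
print(route(question))
```

Only the best-matching snippet reaches the prompt, which is how a pipeline keeps the context small. A production router would more likely use a classifier or a confidence score than a word count, but the cost-saving structure is the same.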

The Next Horizon

The road to 2026 is not a straight line of progress but a series of trade-offs. We have gained incredible speed and flexibility at the cost of transparency and predictability. The distribution advantage of the tech giants has made AI a ubiquitous part of daily life, yet the underlying reality of how these models function remains misunderstood by the general public. Looking ahead to , the focus will shift from making models larger to making them more efficient and autonomous. The most successful individuals and companies will be those who treat AI as a powerful but fallible partner rather than an all-knowing oracle. The live question that remains is whether we can build a system that possesses the reasoning of the old expert systems and the linguistic fluidity of modern neural networks. Until then, the human in the loop remains the most important part of the equation.

Editor’s note: We created this site as a multilingual AI news and guides hub for people who are not computer geeks, but still want to understand artificial intelligence, use it with more confidence, and follow the future that is already arriving.
